CTRLsum: Towards Generic, Controllable Text Summarization

Lead Author: Junxian He

The Road to CTRLsum: Promise, Problem, Solution

The promise of text summarization programs: AI reduces reading time

One of the most challenging aspects of modern life is dealing with the mass of information that comes into our daily lives, in both professional and personal settings. Tools that could help us manage this information overload are highly desired.

AI-based text summarization systems have the potential to help us with this time management task by making reading more efficient. Given source documents containing long pieces of text (input), these systems produce concise summaries (output) that convey only the important information, which saves us reading time. Such models have been successfully applied to summarize sports news, meeting notes, patent filing documents, and scientific articles.

The problem: Summaries are too general, not tailored to user interests

Standard text summarization methods tend to treat all readers alike and generate general summaries that broadly cover the entire content of the source document. However, in a practical setting, different users may have unique preferences and expectations of the generated summaries. To increase relevance, such preferences and expectations should be considered by the AI tools when generating summaries.

For example, a basketball fan may want only a short summary of an NBA news article if the teams involved do not interest them, but a longer summary otherwise. A fan of actor Lisa Kudrow would be disappointed if the summary of a news article about the TV show Friends only emphasized co-star Jennifer Aniston. A non-technical reader may simply want to know what a patented invention is used for, while a patent attorney will expect its technical details.

Solution: Controllable summarization tailors content to user interests

Controllable summarization models, a subset of automatic summarization methods, focus on tailoring their generated summaries according to the interests explicitly specified by users. This idea (to give readers more of the content they really want to read) motivated us to develop CTRLsum, a generic summarization framework that enables users to control the content of the generated summaries along multiple dimensions. The CTRLsum model conditions summary generation on a set of keywords and the source document, where the keywords serve as a communication interface between users and the model to achieve generic control.

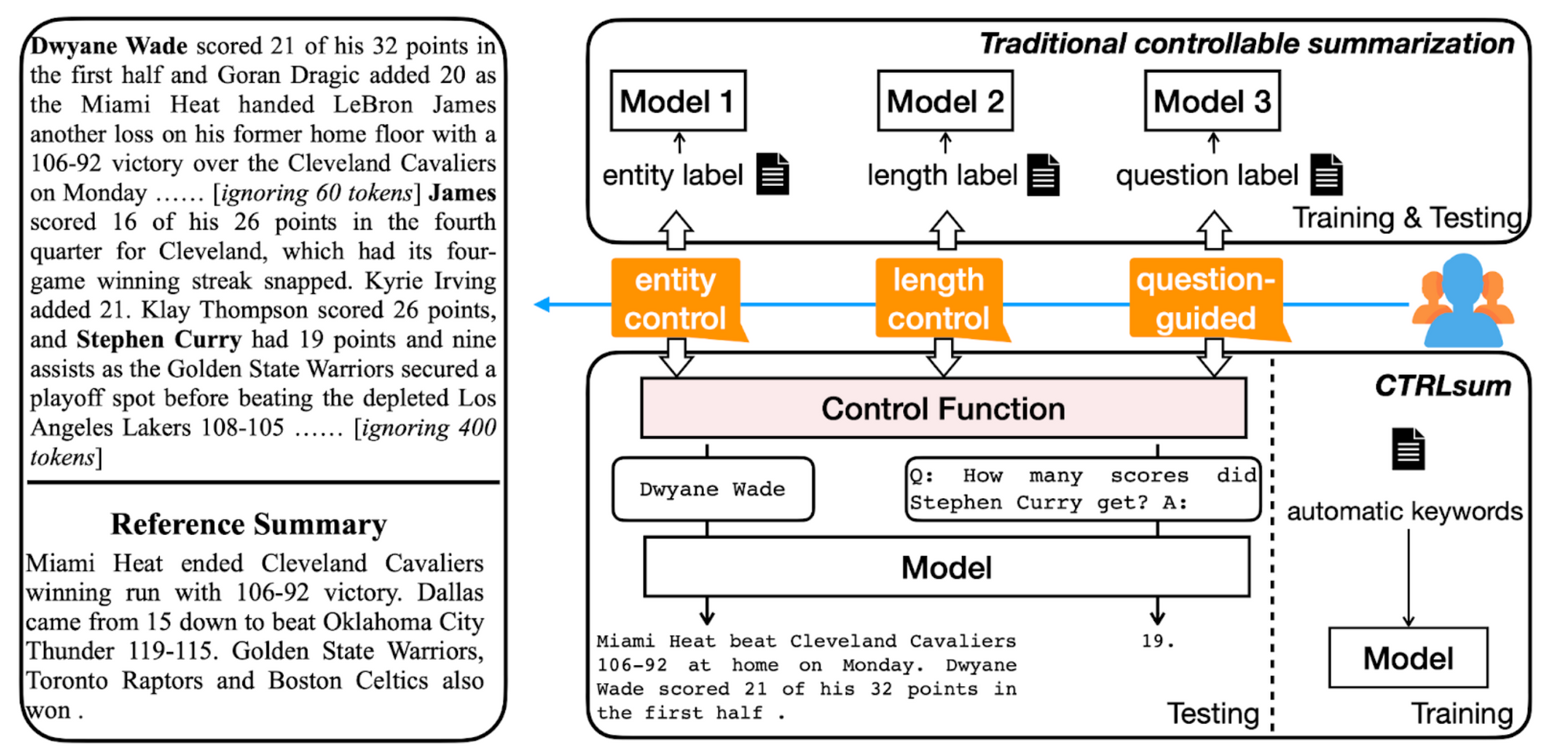

Figure 1. Top Right: Traditional methods, which incorporate the specific control aspect of user interest into the training process, and thus require training a separate model for each aspect. Bottom Right: The proposed CTRLsum framework, where the model training relies on automatic keywords and is separated from the control aspect. At test time, a specially designed control function maps control signals to keywords, and a single trained model achieves controllable summarization along different dimensions.

How CTRLsum Works

Like traditional summarization systems, CTRLsum takes an article as input and predicts a summary. Unlike those systems, it also expects a set of keywords that describe the user’s preferences as additional input. In the training phase, the model learns to tailor the generated summaries according to the provided keywords, thus focusing on different aspects of the source article. The training keywords are extracted automatically from the training source article by utilizing the ground-truth unconstrained summaries (see details in our research paper), without requiring human annotations. These keywords are not constrained to any specific control aspect, such as entity of interest (e.g., person, organization, location) or summary length. Training keywords for the example in Figure 1 would include “Miami Heat,” “Cleveland Cavaliers,” “106-92,” and “Golden State Warriors” (a subset of all the keywords for that example).
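To make the idea of annotation-free training keywords concrete, here is a deliberately simplified sketch. It is not the extraction procedure from the research paper: it assumes that a keyword is any article token that also appears in the reference summary, and the stop-word list and the function names (`tokenize`, `extract_training_keywords`) are hypothetical and exist only for this illustration.

```python
import re

# Tiny illustrative stop-word list (an assumption; a real system would use a fuller one).
STOP_WORDS = {"the", "a", "an", "of", "to", "in", "and", "on", "for", "with", "at", "by", "is", "was"}


def tokenize(text):
    """Lowercase the text and split it into tokens, keeping hyphenated items such as 106-92 whole."""
    return re.findall(r"[a-z0-9]+(?:-[a-z0-9]+)*", text.lower())


def extract_training_keywords(article, reference_summary):
    """Return article tokens that also occur in the reference summary, in article order.

    Simplifying assumption: plain token overlap stands in for the paper's procedure.
    """
    summary_vocab = set(tokenize(reference_summary)) - STOP_WORDS
    keywords, seen = [], set()
    for token in tokenize(article):
        if token in summary_vocab and token not in seen:
            keywords.append(token)
            seen.add(token)
    return keywords


article = "The Miami Heat beat the Cleveland Cavaliers 106-92 on Friday night."
summary = "Miami Heat defeat Cleveland Cavaliers 106-92."
print(extract_training_keywords(article, summary))
```

Because the keywords come from whatever the reference summary happens to mention, nothing in this step is tied to a particular control aspect, which is the property the paragraph above relies on.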

This design is key for CTRLsum to control summaries along different dimensions using a single model at inference time. Specifically, we engineer a control function to convert user intent to keywords at inference time to achieve various kinds of summary manipulation. This control function is designed with heuristics and is opaque to users.

For example, when reading an NBA article, a user can specify Dwyane Wade as the player of interest; the control function in CTRLsum then uses "Dwyane Wade" as the keywords to generate a summary focused on this player. A user can also specify the desired summary length on a scale from 1 to 5; in this case, the control function varies the number of automatic keywords to generate a summary of the desired length. CTRLsum further explores combining control keywords with natural language prompts for more flexible control. For instance, a user can ask the summarizer a question such as "How many points did Stephen Curry get?" The control function then uses the template "Q: How many points did Stephen Curry get? A:" as both the keywords and the prompt to yield a question-guided summary. Similarly, in the patent domain, a user can choose to summarize only the purpose of a patent from its filing document; the control function then outputs "The purpose of the patent is" to produce a concise summary focused on that purpose. Figure 1 demonstrates this workflow.
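The mapping from user intent to keywords and prompts described above can be sketched as a handful of small functions. This is a minimal illustration rather than the released implementation: the `ControlSignal` container and the function names are hypothetical, and reusing the patent phrase as both keywords and prompt is an assumption made here by analogy with the question template.

```python
from dataclasses import dataclass


@dataclass
class ControlSignal:
    keywords: str  # text given to the model together with the source article
    prompt: str    # text the generated summary should start from ("" means no prompt)


def entity_control(entity):
    """Entity control: the entity of interest is used directly as the keywords."""
    return ControlSignal(keywords=entity, prompt="")


def question_control(question):
    """Question-guided control: the Q/A template serves as both keywords and prompt."""
    template = f"Q: {question} A:"
    return ControlSignal(keywords=template, prompt=template)


def patent_purpose_control():
    """Patent-purpose control (assumption: the phrase is used as both keywords and prompt)."""
    phrase = "The purpose of the patent is"
    return ControlSignal(keywords=phrase, prompt=phrase)


print(entity_control("Dwyane Wade"))
print(question_control("How many points did Stephen Curry get?"))
print(patent_purpose_control())
```

Each function only produces text, so supporting a new kind of control in this sketch means writing another small mapping while the trained model stays unchanged.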

To use the keywords, we simply concatenate them and the source article together as input without modifying the model architecture. Hence, CTRLsum can be trained on any supervised summarization dataset using any of the neural architectures commonly used in NLP.
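As a rough sketch of what "concatenate them and the source article" could look like, the helper below joins the keywords and places them in front of the article in one input string. The function name `build_model_input` and both separator strings are assumptions chosen for readability; the released code may use different markers.

```python
def build_model_input(keywords, article, keyword_sep=" | ", boundary=" => "):
    """Join the keywords and prepend them to the article as a single input string.

    keyword_sep and boundary are illustrative defaults, not confirmed values.
    """
    cleaned = [k.strip() for k in keywords if k.strip()]
    return f"{keyword_sep.join(cleaned)}{boundary}{article.strip()}"


text = build_model_input(
    ["Miami Heat", "Cleveland Cavaliers"],
    "The Miami Heat beat the Cleveland Cavaliers 106-92 on Friday night.",
)
print(text)
```

Since the result is ordinary text, it can be tokenized and passed to a standard sequence-to-sequence model exactly like an unmodified article, which is why no architectural change is needed.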

Below we discuss a subset of the control aspects explored in the research paper. We refer you to the paper for quantitative results and more control aspects.

Control Aspects

Entity control: At inference time, a user specifies the entity of interest, and the control function in CTRLsum passes that entity to the model as the keywords for generating the summary. Figure 2 demonstrates examples where one entity is used as the keyword (multiple entities are directly supported as well, without any modifications).

Figure 2. Entity-controlled summarization demonstration.

Length control: Users only need to provide the desired length, and the control function selects the corresponding number of keywords obtained from a trained keyword tagger. Intuitively, more keywords usually lead to longer summaries, as shown in Figure 3.

Figure 3. Length-controlled summarization demonstration.
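The length-control step described above can be sketched as follows. The keyword budget per length level is invented for illustration, and the scored keywords stand in for the output of a trained keyword tagger; `KEYWORDS_PER_LEVEL` and `length_control` are hypothetical names.

```python
# Hypothetical keyword budget for each length level from 1 (shortest) to 5 (longest).
KEYWORDS_PER_LEVEL = {1: 2, 2: 4, 3: 6, 4: 9, 5: 12}


def length_control(scored_keywords, level):
    """Keep the highest-scoring keywords for a length level, restored to document order.

    scored_keywords: list of (keyword, score) pairs in document order, where the
    scores are assumed to come from a keyword tagger.
    """
    if level not in KEYWORDS_PER_LEVEL:
        raise ValueError("level must be an integer from 1 to 5")
    budget = KEYWORDS_PER_LEVEL[level]
    indexed = list(enumerate(scored_keywords))
    # Rank by tagger score, keep the top `budget`, then sort back by original position.
    top = sorted(indexed, key=lambda item: item[1][1], reverse=True)[:budget]
    return [word for _, (word, _) in sorted(top, key=lambda item: item[0])]


scored = [("heat", 0.9), ("friday", 0.2), ("cavaliers", 0.8), ("106-92", 0.7), ("night", 0.1)]
print(length_control(scored, 1))
print(length_control(scored, 2))
```

A higher level passes more keywords to the model, which matches the intuition that more keywords usually lead to longer summaries.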

Question-guided summarization: Surprisingly, we found that CTRLsum is able to perform question-guided summarization in a reading comprehension setting, even though it is trained on a summarization dataset without seeing any question-answer pairs. This is shown in Figure 4. Here we use questions as both the keywords and prompts. From the quantitative results in our paper, CTRLsum is able to match early LSTM-based supervised QA results on the NewsQA dataset, despite being trained only on standard summarization datasets.

Figure 4. Question-controlled summarization demonstration.

The Bottom Line

Let's wrap up our discussion of controlled text summarization by, well, summarizing! Here's a quick review, final thoughts, and some useful resources:

- Information overload is a big problem: we all have too much to read.

- AI-based text summarization systems turn long stories (input) into condensed summaries (output) containing just the essential info, saving us reading time.

- However, current AI-based text summarization systems yield summaries that are overly general and disconnected from users' preferences and expectations.

- Personalized text summarization would be preferable, to accommodate different users’ unique expectations and preferences; this is important in practical deployment.

- To address the limitations of text summarization systems, we developed CTRLsum: a controllable summarization method that tailors summaries to each user, based on their preferences (e.g., the specific person, thing, or subject they want more info about).

- CTRLsum requires neither human annotations nor pre-defining control aspects for training, yet the system is quite flexible and can achieve a broad scope of text manipulation.

  - In contrast, prior work needed to collect annotations for training, and could not generalize easily to unseen control aspects at test time.

- The demonstrations above are only a small subset of the use cases that CTRLsum can be applied to. We expect that the trained CTRLsum model can be directly used for a broader range of control scenarios without updating the model parameters. All that matters is how to map user intent to the keywords and prompts in the control function.

  - For instance, topic-controlled summarization may be achieved by using the keywords in the source article that are related to the given topic, potentially utilizing pre-trained word embeddings or WordNet. Likewise, using simpler synonyms as keywords may lead to paraphrased summaries that are more appropriate for younger readers or those with less formal education.

- We released CTRLsum weights trained on several popular summarization datasets; they are also available on the Hugging Face model hub.

- We released a demo to showcase the outputs of CTRLsum along various control dimensions. A third-party demo for users to interact with CTRLsum is also available at Hugging Face Spaces.

- Readers interested in quantitative evaluations of CTRLsum and the experiments we performed can find them in the full research paper.

Explore More

Salesforce AI Research invites you to dive deeper into the concepts discussed in this blog post (links below). Connect with us on social media and our website to get regular updates on this and other research projects.

- Read more about CTRLsum in our research paper

- Demos:

- GitHub: https://github.com/salesforce/ctrl-sum

- Feedback? Questions? Contact Junxian He at junxianh@cs.cmu.edu

About the Authors

Junxian He (Lead Author) is a Ph.D. student at Carnegie Mellon University. He works on natural language processing and machine learning, focusing on generative models and natural language generation.

Wojciech Kryściński is a Senior Research Scientist at Salesforce AI Research. He works on problems in Natural Language Processing and focuses on developing new methods, metrics, and datasets for automatic text summarization.

Donald Rose is a Technical Writer at Salesforce AI Research. He works on writing and editing blog posts, video scripts, and media/PR material, as well as helping researchers transform their research into publications suited for a popular (less technical) audience.