Data-Driven, Interpretable, and Robust Policy Design using the AI Economist

This blog accompanies the interactive demo and paper! Dive deeper into the underlying simulation code and simulation card. In this blog post, we focus on the high-level features and ethical considerations of AI policy design.

How can AI Help Inform Real-world Policy Design in the Future?

Optimizing economic and public policy is critical to address socioeconomic issues that affect us all, like equality, productivity, and wellness. However, policy design is challenging in real-world scenarios. Policymakers need to consider multiple objectives, policy levers, and how people might respond to new policies. In our research, we ask:

How can we use AI to design effective and fair policy?

At Salesforce Research, we believe that business is the greatest platform for change. We developed the AI Economist framework to apply AI to economic policy design and to advance social good.

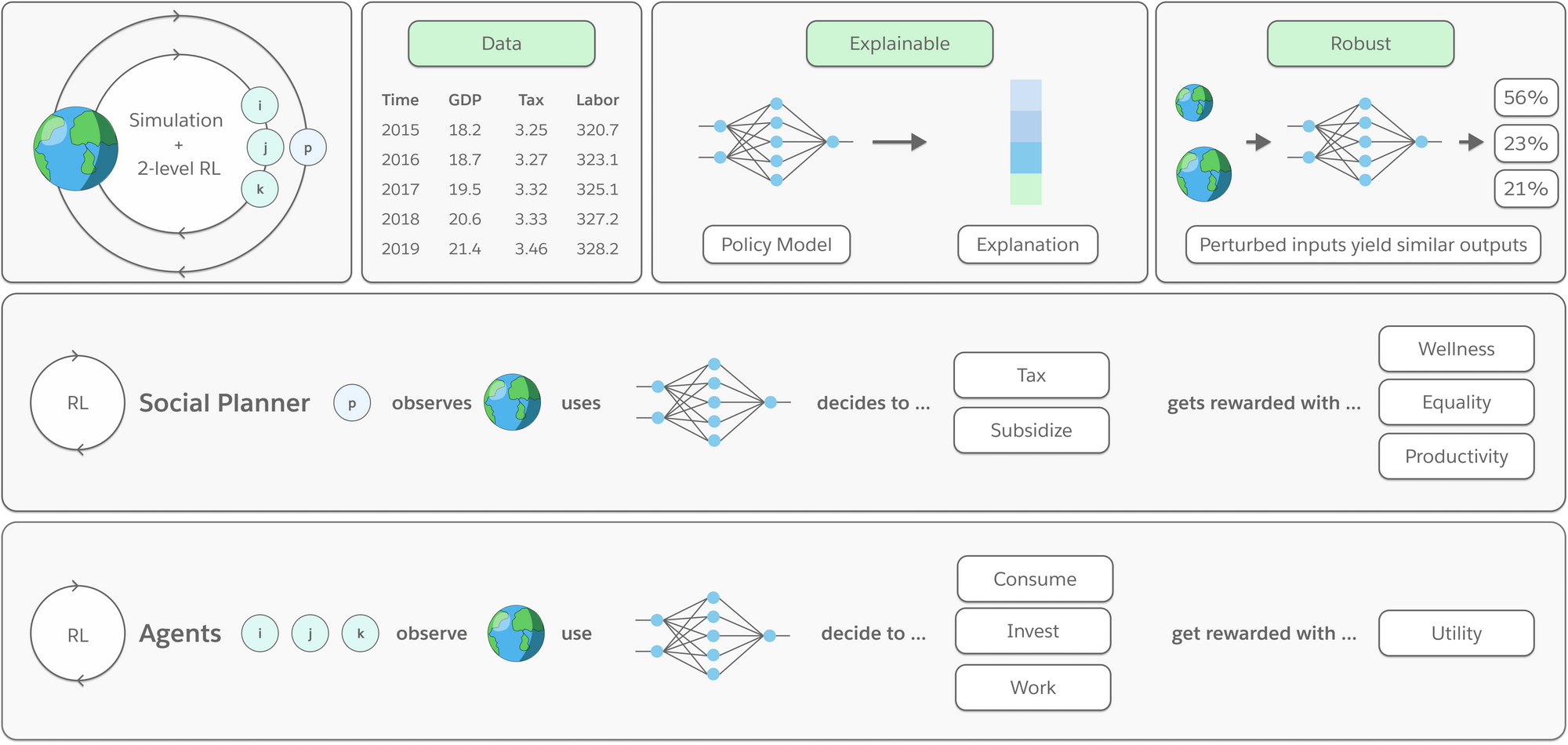

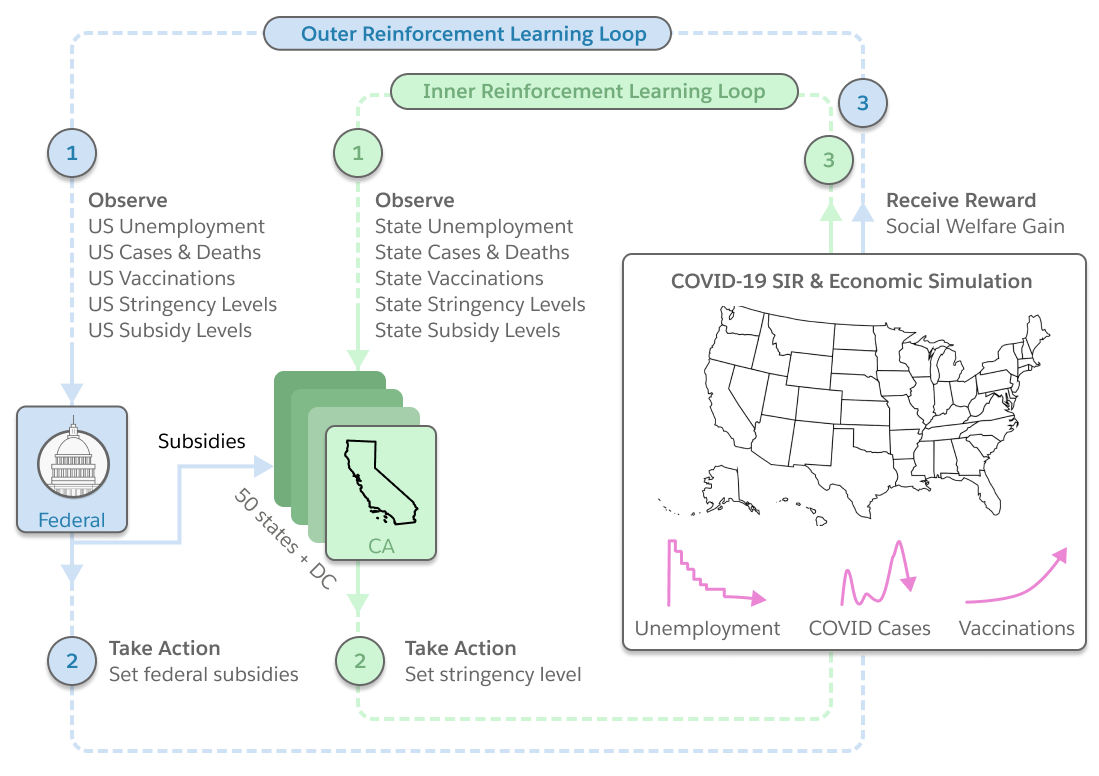

The AI Economist framework designs policies using two-level reinforcement learning and data-driven simulations. We first showed how to learn AI income tax policies that improve equality and productivity (see this blog post).
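To make the two-level idea concrete, here is a minimal Python sketch rather than the framework's actual implementation. The inner level lets each agent adapt to a given tax rate; the outer level scores that tax rate only after agents have adapted. Everything specific here is an assumption for illustration: the quadratic effort cost, the equal rebate of tax revenue, the "worst-off agent" objective, and all function names. Plain grid search stands in for reinforcement learning at both levels to keep the example short.

```python
def best_response_labor(tax_rate, skill, labor_grid):
    """Inner level: an agent picks the labor that maximizes its own utility."""
    def utility(labor):
        income = skill * labor
        # Hypothetical utility: post-tax income minus a quadratic effort cost.
        return (1.0 - tax_rate) * income - 0.5 * labor ** 2
    return max(labor_grid, key=utility)


def planner_objective(tax_rate, skills, labor_grid):
    """Outer level: score a tax rate after agents have adapted to it."""
    incomes = [s * best_response_labor(tax_rate, s, labor_grid) for s in skills]
    rebate = tax_rate * sum(incomes) / len(incomes)  # revenue shared equally
    post_tax = [(1.0 - tax_rate) * inc + rebate for inc in incomes]
    # Hypothetical objective: the post-tax income of the worst-off agent.
    return min(post_tax)


def design_policy(skills, tax_grid, labor_grid):
    """Pick the tax rate with the best outer-level score (grid search, not RL)."""
    return max(tax_grid, key=lambda t: planner_objective(t, skills, labor_grid))


skills = [1.0, 2.0, 3.0]                      # toy population
labor_grid = [i / 100 for i in range(301)]    # labor choices from 0.00 to 3.00
tax_grid = [i / 20 for i in range(21)]        # tax rates from 0.00 to 1.00
best_tax = design_policy(skills, tax_grid, labor_grid)
print(f"best flat tax rate in this toy economy: {best_tax:.2f}")
```

In this toy economy a higher tax funds a larger rebate but also makes every agent work less, so the planner's best choice is an interior tax rate rather than 0% or 100%. That interaction between the two levels is the point of the sketch.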

We’ve worked on extending our framework and applying it to a new use case: responding to pandemics, like COVID-19.

Our project showcases how AI could be used for policy making in the future. This will also require further developing our framework. Key required features and benefits of using AI for policy design include:

- Simulating complex economies. The simulation should model the right economic processes that are relevant to the policy objective.

- Fitting to real data. The simulation should be grounded in real data.

- Using multiple policy levers. The framework can include different types of policy choices, e.g., taxes, subsidies, and closures. Reinforcement learning can, in principle, optimize any type of policy.

- Considering many policy objectives. The policy designer can include any metric of interest into the policy objective, and these do not need to be analytic or differentiable.

- Finding strategic equilibria. Optimal policies should consider how economic agents respond to (changes in) policy.

- Emulating human-like behavior. Economic agents should behave and respond like humans.

- Being robust. The performance of learned policies should be robust to differences between the simulation and the real world.

- Explaining decisions. The causal factors for policy decisions should be explainable.

- Being implementable. The behavior of policies should be simple and consistent enough to be applicable in the world.
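As an illustration of the point that policy objectives can combine several metrics and need not be differentiable, here is a minimal Python sketch. The choice of metrics (total income, a Gini-based equality score, and a threshold-based poverty metric), the weights, and all names are assumptions for this example, not the objective used in our paper.

```python
def gini(incomes):
    """Gini coefficient of a list of non-negative incomes (0 = perfect equality)."""
    xs = sorted(incomes)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum((rank + 1) * x for rank, x in enumerate(xs))
    return 2.0 * weighted / (n * total) - (n + 1.0) / n


def policy_objective(incomes, poverty_line, weights):
    """Weighted sum of several metrics; the weights encode the designer's priorities."""
    metrics = {
        "productivity": sum(incomes),
        "equality": 1.0 - gini(incomes),
        # A step-like metric: not differentiable with respect to incomes.
        "above_poverty_line": sum(x >= poverty_line for x in incomes) / len(incomes),
    }
    return sum(weights[name] * value for name, value in metrics.items())


weights = {"productivity": 1.0, "equality": 1.0, "above_poverty_line": 1.0}
equal_outcome = [2.0, 2.0, 2.0, 2.0]
unequal_outcome = [0.0, 0.0, 0.0, 8.0]
print(policy_objective(equal_outcome, poverty_line=1.0, weights=weights))
print(policy_objective(unequal_outcome, poverty_line=1.0, weights=weights))
```

Both outcomes have the same total income, so any difference in score comes from the equality and poverty terms. Because a learning-based planner only needs to evaluate the objective, metrics like the threshold count above can be included as-is.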

Pandemic Response Case Study

In our interactive demo and paper, you can learn how we applied this framework to analyze pandemic response policy.

Ethical Risks and Considerations

This work should be regarded as a proof of concept. There are aspects of the real world that no AI simulation can yet capture. As a result, the simulations proposed here, and the insights that come from them, are not designed to inform, evaluate, or develop real-world policy. All data have their limitations, and those used to model complex systems like health and the economy may fail to model impacts on specific segments of the population (e.g., historically marginalized or other vulnerable groups). Our goal is to develop more realistic simulations in the future.

We are very clear that there are known and unknown risks in the publication of research around the use of AI to weigh the policy tradeoffs in potentially sensitive areas like health, employment, education and the environment. In recognition of the potential risks, we commissioned Business for Social Responsibility (BSR) to conduct an ethical and human rights impact assessment of this research before its release.

Some, though not all, of the risks they noted are associated with the use of the simulation beyond its intended purpose and over-reliance on, or overconfidence in, its AI-driven policy recommendations. In BSR’s words, they are as follows:

- Policymakers may use the simulation and/or underlying research to inform decision-making. There is a risk that policymakers may use the simulation directly to test potential policies. Policymakers may also use insights and findings from published reports or press coverage to inform public policy.

- The simulation and research may be used for purposes other than those originally intended. There is a risk that the limitations of the simulation and guidance on appropriate use are misunderstood, misinterpreted, or ignored by readers, leading to inappropriate usage of the simulation for purposes other than intended. This could include using the simulation to create policy responses for future cases not covered by the simulations conducted here.

- Humans may over-rely on insights from the simulation. A simulation provides a veneer of precision and objectivity that imbues a high level of confidence in AI policies. This may result in humans using outputs directly, without external validation or review, and without testing the recommendations in real-world scenarios. Humans often over-rely on AI, sometimes with grave results. This is particularly concerning in the context of a “moral” or “ethical” tradeoff between economic health and public health and safety.

- The simulation may be manipulated to ‘optimize’ for specific policies or outcomes. Policymakers or government actors may use a simulation to justify policy decisions, giving people a false sense of confidence in the policy without insight into the limitations of the simulation. Alternatively, policymakers may use open-source code to develop new models that optimize for their own self-interest, tweaking parameters and outcomes until they achieve the desired outcome.

- The AI Economist open-source code may be altered for use in new geographies or to guide policy responses in future pandemics. If the underlying code and datasets for the AI Economist’s COVID simulation are made publicly available, there is a risk that it could be altered for use in other geographies without the appropriate data inputs, or that it could be used to guide policy making and decisions in future pandemics, without the appropriate data on the disease.

These risks could be associated with the following adverse human rights impacts:

- Right to Equality and Non-Discrimination (UDHR Article 2 / ICCPR Article 2): Failure to disaggregate data by race, gender, age, or other protected categories limits a simulation’s ability to extrapolate policy impacts on specific groups or populations. If a simulation is used to make policy decisions without taking into consideration policy impacts on specific segments of the population, there is a risk of discrimination and adverse human rights impacts on higher-risk populations.

- Right to Life and Right to Health (UDHR Articles 3, 25 / ICESCR Article 12 / ICCPR Article 6): If a policymaker or government actor uses outcomes from a simulation to justify less stringent public health policies, it could lead to severe adverse health impacts, including long-term illness or death. Furthermore, if the underlying code is used to create simulations that inform policy decisions in future pandemics, it may lead to similar adverse health impacts, particularly if the new model fails to integrate data sources and context specific to the disease.

- Right to Work and Adequate Standard of Living (UDHR Articles 23, 25 / ICESCR Article 6): Policymakers and government actors may use the model in ways that result in job loss and adverse impacts on the right to work. For example, if a policymaker or government actor uses the simulation to justify stringent public health measures that lead to lockdowns, this could result in significant job loss. These impacts may be particularly severe if the policy response does not include financial support or subsidies for individuals experiencing financial hardship. Violations of these rights may also have knock-on impacts on other human rights.

- Freedom of Movement, Freedom of Assembly, and Access to Culture (UDHR Articles 13, 20, 27 / ICCPR Articles 12, 21, 27): In addition to the rights above, stringent public health policies, including lockdowns, will impact individuals’ freedom of movement, freedom of assembly, and access to the cultural life of the community.

- Right to Education, Healthcare, and other Public Services (UDHR Articles 26, 25, 21b / ICESCR Articles 13, 15): Depending on the context, public health policies may also impact access to education, healthcare, or other public services.

These adverse impacts are more likely to affect at-risk and vulnerable populations with less access to resources that would allow them to sustain themselves through job loss, economic shutdowns, and health complications.

To mitigate these risks to the extent possible, we have taken the following steps:

- Limiting the public web demo to insights. The public website does not include any interactive elements that would allow individuals to optimize for variables or tradeoffs, which limits its potential use as a policy-making tool. Instead, it includes a summary of research findings and accompanying visuals.

- Gating the code. Before gaining access to the simulation code, an individual must provide their name, email address, affiliation, and intended use of the code. In addition, we ask users to attest to a Code of Conduct governing its use. By doing so, we hope to significantly mitigate risks of misuse, abuse, or use beyond originally intended purposes, while still enabling the sharing of research to advance the field and providing the opportunity for replicability.

- Detailing limitations. We have made every effort to be as transparent and clear as possible about the limitations of this simulation and the datasets which inform it. Our disclaimers include the intended use of the simulation, its limitations in modeling real-world effects, and the lack of disaggregated datasets.

- Creating a simulation card. We have published a simulation card that describes the COVID simulation’s data sources, inputs, and limitations, and presents basic performance metrics. It also provides 1) a concise overview of the simulation and accompanying research, its key audience, and intended use cases, 2) a description of the limitations and assumptions (both explicit and implicit) of the model, 3) clear definitions of the variables and descriptions of what they mean, and 4) clear communication of how the simulation works. We hope that “model cards” and “simulation cards” of this nature become a standardized practice across technology companies and research institutions.

We expect and hope to engage users of the AI Economist research, simulation, and underlying code to understand how they are being used and expanded upon outside of Salesforce. We approach this work with humility and hope to hear from the scientific community about its limitations and applications.

About the Authors

Alex Trott is a Senior Research Scientist at Salesforce Research, which he joined after earning his PhD in Neuroscience at Harvard University (2016). His research reflects a passion for making sense of complex systems — most recently through the application of multi-agent reinforcement learning. Alex has received several awards recognizing his work in Neuroscience and, in his time at Salesforce, has earned media coverage through his contributions to the AI Economist team.

Sunil Srinivasa is a Research Engineer at Salesforce Research, leading the engineering efforts on the AI Economist team. He is broadly interested in machine learning, with a focus on deep reinforcement learning. He is currently working on building and scaling multi-agent reinforcement learning systems. He previously spent over a decade in industry in data science and applied machine learning roles. Sunil holds a Ph.D. in Electrical Engineering (2011) from the University of Notre Dame.

Stephan Zheng – www.stephanzheng.com – is a Lead Research Scientist and heads the AI Economist team at Salesforce Research. He works on using deep reinforcement learning and economic simulations to design economic policy. Media coverage of his work includes the Financial Times, Axios, Forbes, Zeit, Volkskrant, MIT Tech Review, and others. He holds a Ph.D. in Physics from Caltech (2018) and interned with Google Research and Google Brain. Before machine learning, he studied mathematics and theoretical physics at the University of Cambridge, Harvard University, and Utrecht University. He received the Lorenz graduation prize from the Royal Netherlands Academy of Arts and Sciences for his thesis on exotic dualities in topological string theory and received the Dutch Huygens scholarship twice.

Acknowledgements

This blog is based on a research project conducted and authored by Alex Trott*, Sunil Srinivasa*, Douwe van der Wal, Sebastien Haneuse, and Stephan Zheng* (* = equal contribution).

We thank Yoav Schlesinger and Kathy Baxter for the ethical review.

We thank Michael Jones and Silvio Savarese for their support.

We thank Lav Varshney, Andre Esteva, Caiming Xiong, Michael Correll, Dan Crorey, Sarah Battersby, Ana Crisan, and Maureen Stone for valuable discussions.

Find Out More

- Check out the interactive demo.

- Check out our code and simulation card on GitHub.

- Check out the technical paper.

- Follow us on Twitter: @SalesforceResearch, @Salesforce.

- Check out www.einstein.ai and learn more about what Salesforce AI Research is working on!

- Let us know how to extend the simulation and policy model!