If You Can Say It, You Can Do It: The Age of Conversational AI


Imagine you find yourself in the cockpit of a next-generation spacecraft—the kind that can get you from low Earth orbit to the Kuiper belt without breaking a sweat. How do you envision controlling it? Science fiction has conditioned us to equate futuristic technology with dazzling complexity, so you might picture rows and rows of blinking lights, shiny buttons, and screens packed with glowing numbers and undulating sinusoids. It would certainly look impressive. But is this really the mark of an advanced technology?

Instead, imagine a cockpit that’s all but empty, trading wall-to-wall controls for a stunning, panoramic view. You take a seat in the captain’s chair, drink in the scenery for another moment or two, then simply say, out loud, “Take me to Saturn!” No buttons, no switches, no calculating trajectories. You don’t even need some special code or syntax. Just the natural language you use every day.

Imagine further that the ship responds in kind, instantly—with a voice of its own. “Destination set to Saturn,” it says, its tone rising and falling with lifelike inflection. “Would you prefer the fastest route? Alternatively, we can harness the gravitation of nearby bodies to reduce fuel consumption, but the trip will be 17% slower. And there’s always the scenic route. It’s the longest and least efficient, but we’ll get a clear view of the Stickney crater—the largest on Phobos—and fly by Jupiter’s Great Red Spot!”

After considering your options, your response is as effortless as your initial command, spoken in the same plain style. “I think I’ll go with the scenic route.” (As long as we’re imagining, we might as well have fun with it.) That’s it. Your duties as captain are fulfilled.

“Scenic route confirmed,” the ship replies. “Buckle up!”

A brief history of our relationship with tools

A spacecraft operated by natural language is a big deal no matter how you look at it, but it’s an especially ambitious dream given our history as toolmakers, one that stretches so far back that we, Homo sapiens, actually inherited the notion from our predecessors. Evidence from the Lower Paleolithic, some 2.6 million years ago, suggests that early humans had a natural affinity for smashing rocks until they fractured, turning lumps of stone into sharp-edged cutting implements. It’s a category of artifact archeologists call “Mode I” stone tools: hand-held, abundant, and applicable to a wide range of tasks. It was, in essence, Earth’s first technology.

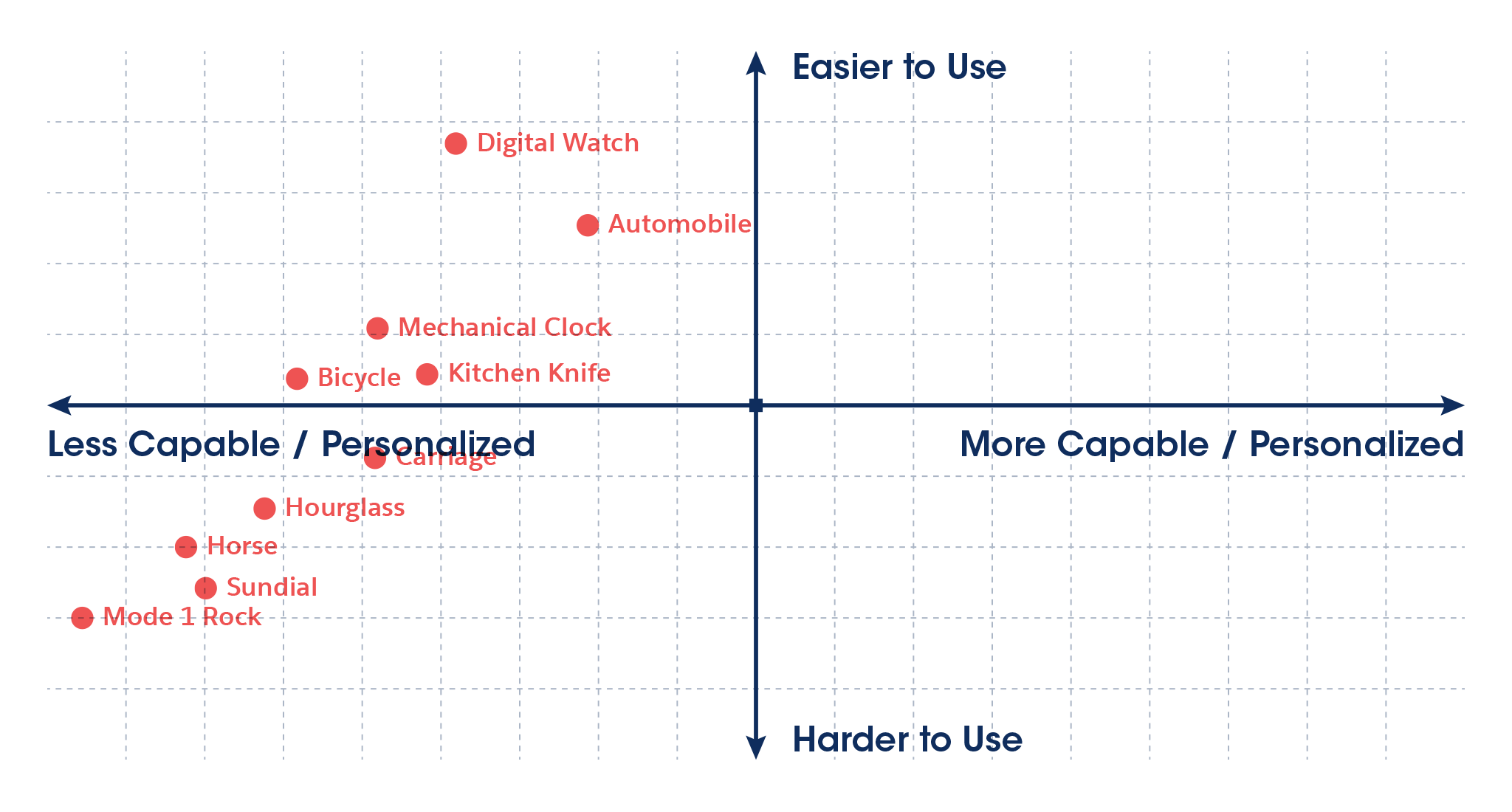

We’ve been a busy species in the millennia since, extending our natural abilities with artificial help in countless ways. The Mode I rock, for instance, was the first in a long lineage of stone tools, but eventually gave way to the sophisticated successors we know today. What makes this history especially interesting, however, is that our tools don’t just grow in their power and range—they tend to become easier to use as well.

Granted, a rock isn’t exactly complex to begin with, but the modern knife is a lot more user friendly—not just because its blade is sharper, but because it’s equipped with a handle that improves leverage and safety. The same is true of vehicles, which prioritize advances in speed and range as much as ergonomics and driver comfort. Even timekeeping has evolved from mechanisms of sand, water, and gears (and all the headaches that entails) to digital watches that deliver millisecond accuracy without maintenance.

Each of these examples is a testament to a profound idea: that the best tools aren’t just powerful and easy to use, but powerful because they’re easy to use.

But the story doesn’t end there. As the information age began to take shape in the 20th century, an entirely new category of tools emerged, leveraging abstract capabilities like computation and the manipulation of symbolic data. In a matter of decades, digital technology transformed the world as dramatically as anything in the preceding millennia, enabling capabilities that would seem miraculous to even our recent ancestors. Those advances, however, have come at a price: today, our tools have never demanded more of us.

A world growing too complicated to comprehend

As an example of how radically our relationship with technology has changed, consider the evolution of graphic design. Like most artforms, it was a purely analog practice for much of its history; the talent required to do good work might take years to develop, but the tools used to express that talent were tactile and intuitive. Designers made extensive use of pencils, pens, knives, adhesives, and stencils, all of which could be understood at a glance, and were often mastered in childhood. Even the more sophisticated gear, like typesetting machines and cameras, might require a bit of training or practice, but they were generally built on straightforward principles.

Today, however, simply learning to operate the software that has become standard across the design industry requires an unprecedented investment of time and effort. Although the designers of yesteryear would find these programs’ capabilities almost magical, their complexity is such that beginners often resort to doorstop-sized manuals, classes, and hours and hours of tutorial videos just to get started. How else can one make sense of the icons, menus, palettes, and keyboard shortcuts that make up their interfaces?

In response, a parallel market has emerged for simpler alternatives that feature gentler learning curves. But these friendlier apps demonstrate an unfortunate tradeoff: as accessibility rises, capabilities tend to fall. So while they may be easier to use, they’re less flexible, produce results of a lower quality, and are generally unsuitable for professionals.

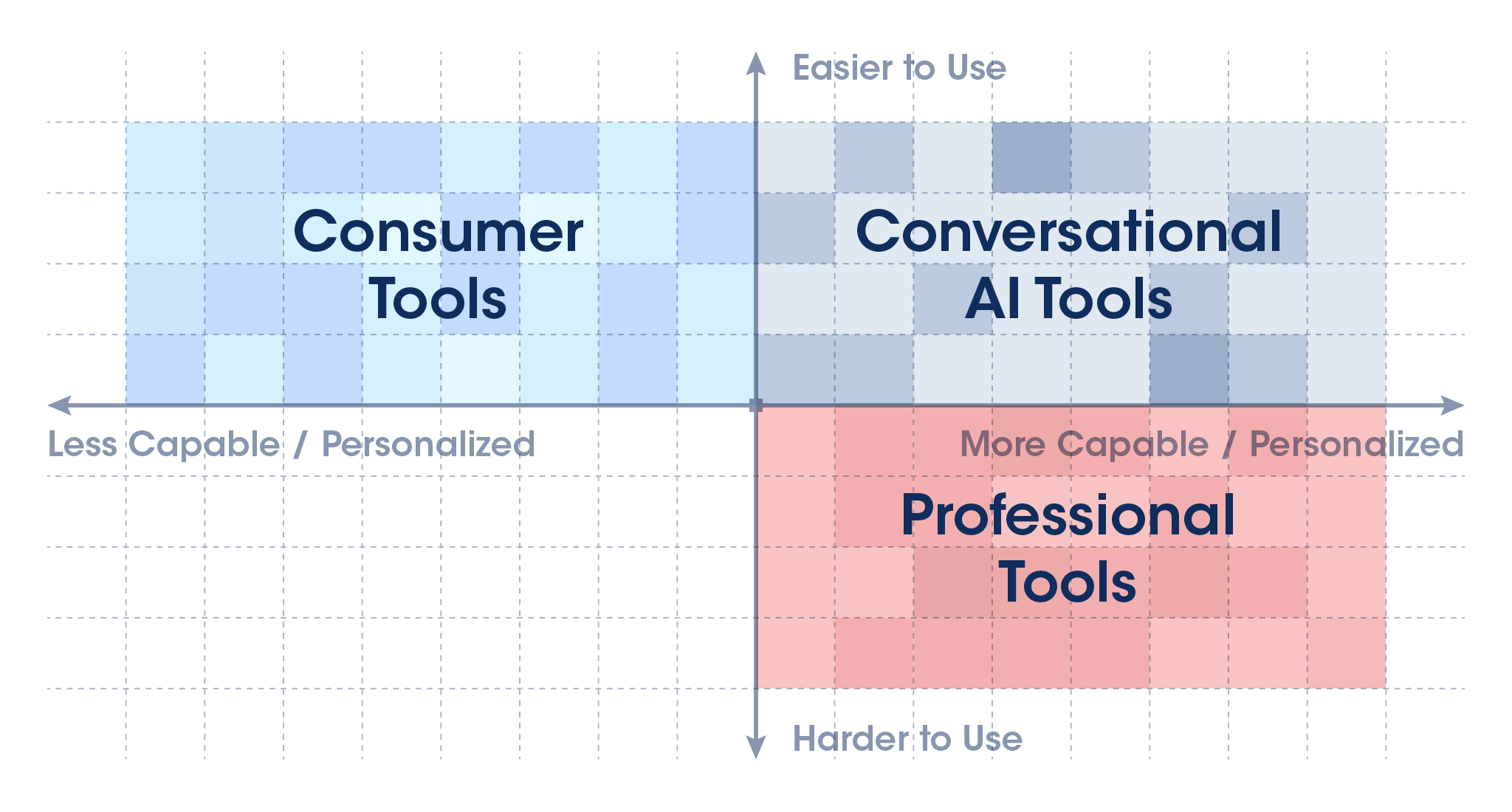

We can visualize this tradeoff as a two-dimensional graph in which the X-axis represents capability and flexibility while the Y-axis represents ease of use. Instantly, we can see that professional tools gravitate towards the lower right, where ease of use is often low while capability and flexibility are high; in contrast, tools aimed at novices tend to be found in the opposite direction (towards the upper left).

The pattern itself is clear: today’s most powerful tools are also the most difficult to use. What’s less obvious is how we should interpret it. Is complexity some inescapable byproduct of the modern world? Or can it be avoided with a fresh approach? Either way, as demands for our time and attention continue to rise—with no end in sight—something’s gotta give.

Consider the following trends:

- Information overload. The sheer quantity of content competing for our attention—books, social media, the news, podcasts, movies and TV, educational materials, and so much more—is spiraling out of control. Few of us have anywhere near enough time to consume everything we’d like, in both our personal and professional lives.

- Increasing workloads. As industries of all kinds face tightening budgets and mounting competition—sometimes from advances in technology itself—even highly-trained experts are feeling pressured to do more with less.

- Trapped potential. Meanwhile, a vast world of hidden talent—and value—may be hiding within our colleagues. How many of our peers have ideas worth contributing, whether creative, technical, or strategic, but lack the expertise to express them using traditional tools?

- The future of work. Finally, an uncertain horizon looms as the nature of our work changes, and virtually all of us can expect at least some disruption in the coming decade. But today’s tools are so specialized that even moderate career transitions can incur an unrealistic burden of upskilling and retraining.

These are deep-seated problems we can’t expect to solve easily. But if it were possible to revive the spirit that characterized so much of our technology’s history—when increases in sophistication made our tools easier to use, rather than harder—I’m confident we could put a major dent in all of them. That’s why I believe the time has come for a fundamentally new way to interact with our tools.

A radically new paradigm for getting things done

What about conversation?

It may seem mundane, but conversation is among our most powerful and versatile skills. One might even call it a kind of universal interface for human collaboration: a single mode of expression that allows us to plan finances with an accountant, discuss medical treatment with a doctor, catch up on life with an old friend, or simply place a lunch order. It demonstrates an astonishing degree of flexibility, and stands in enviable contrast to the complexity of today’s digital interfaces, not to mention their learning curves.

Of course, the elegance of conversation tends to break down when computers get involved; they might run circles around us when it comes to speed, memory, and networking, but they’re uniquely bad at decoding the way we communicate. Ironically, the very lack of structure that makes conversation so accessible to us is the reason machines struggle to understand it. Even today, with voice-based interfaces rapidly advancing and growing in popularity, viral videos abound of smartphones and home assistants confounded by the ambiguity of natural language, often to comical extremes. But what if that changed? It’s hard to appreciate just how profound a shift in experience a truly conversational interface would represent, so let’s get our creative juices flowing by envisioning what it might be like, step by step.

First, as is often the case with conversations between people, most tasks would begin with an initial statement or request—a description of what the user wants, whether the goal is creating content, consuming information, or even developing a new piece of software.

For example, let’s imagine a marketing professional kicking off a new project with a design tool operated purely through conversation:

“I want a banner ad layout with a dark blue background, our company logo in the corner, and our latest tagline written next to a photo of a forest at sunrise.”

Note the casual, everyday quality of the language. It’s more or less identical to an email one might write to a coworker. And it’s just as versatile; in fact, here’s how this same interface might be involved in automating an executive’s daily news consumption:

“Read the top stories on Forbes, Fortune, and the Wall Street Journal over the last week and let me know if any companies in the biotech space have announced an IPO.”

Simple, huh? No new syntax or structure required. It’s amazing how little has to change in a technical sense to switch gears to an entirely different kind of task in an entirely different industry. So let’s push the envelope further, and think about how this workflow might translate to a simple software development project:

“Create an input form entitled ‘suggestion box’ with two text entry fields: one for the user’s first name, and the other for a suggestion, with a maximum of 480 characters. A submit button should then send its contents to suggestions@salesforce.com.”

It’s worth stopping for a moment to consider the sheer depth of information even simple phrases like these convey. In only a sentence or two, an entire project has been kicked off—a new idea established from scratch—with its details ready to be refined. No clicking, no dragging, no hierarchy of menus, and no scouring the internet for tutorials.

But even this is just a single approach. Conversations don’t always begin with such elaborate statements, after all, and some of the best creative starting points refer to something that already exists. Let’s imagine how this could apply to our marketing example:

“I want to create a banner that looks like this, but with the logo and tagline replaced with our own:” <Paired with an image.>

Notice that like so many conversations, the meaning is spread across both words and something nonverbal—in this case, images, logos, copy, and the like. A truly fluent conversational partner will understand all of it—and not just in isolation, but integrated into a single, connected space of ideas.

This is a radical new paradigm indeed. But although it’s worlds apart from the way we currently interact with our tools, it’s all built on three simple ideas:

- Conversation is a deceptively powerful thing, allowing us to easily describe—and invoke—complex tasks.

- Although jargon may change from one field to the next, the fundamentals of conversation are universal. As a single mode of expression applicable to virtually any goal, it’s inherently accessible.

- The way we converse is often multimodal, in the sense that we pair our words with sights, sounds, and other references to external, nonverbal things.

If the experience ended here, it would already represent a seismic disruption to the way we work. Even if this hypothetical tool could go no further than this single step, such a powerful form of delegation—a first draft created through natural language alone—could boost productivity across the board and lighten just about everyone’s cognitive load. But the real trick is what comes next: when a monologue becomes a dialogue, and evolves over time.

The power of a fully conversational workflow

Conversations are exchanges in which information flows in both directions. Over the course of multiple interactions, a single, persistent idea can evolve from a hazy sketch to a finished product. At each step—whether statements, questions, or answers—details are added, ambiguities are resolved, and the ultimate picture becomes clearer.

Thus, the true power of a conversational interface will emerge when it can respond with proposals: entire courses of action the user can accept, reject, or modify, which, in turn, spurs more conversation. In this sense, the AI is more like a partner than a tool: creative, resourceful, and committed to helping solve a problem. Let’s return to our biotech executive from the previous example, and imagine how her conversational AI might respond:

“I’ve read the articles you requested. No companies have announced an IPO, but three have announced new rounds of funding, and two have announced FDA approval for novel therapies. Would you like to know more?”

Notice how this single response doesn’t just answer the initial question, but puts entirely new options on the table, ready for the user to explore. Other times, the response might involve a medium other than language, as in the case of our marketing professional. Here, the AI may respond with a draft layout, and offer the opportunity to request modifications, or even present multiple layouts and give the user a simple choice:

“Do you prefer option A or B?”

Sometimes, the AI will need to disambiguate the user’s request before proceeding, as we might imagine in our software development example:

“What should the subject line in the suggestion box email say?”

From here, the conversation continues, back and forth, until the desired result is reached.

Now, let’s put it all together and imagine an entire conversation, from start to finish, in each of our three example scenarios:

- Creative:

  - User: “Make the slogan bigger, and find me a different picture. One with a setting sun in the background.”

  - AI: [Presents revised draft]

  - User: “Good, but split the slogan across two lines.”

  - AI: [Presents revised draft]

  - User: “Perfect!”

- Informational:

  - AI: “No companies have announced an IPO, but three have announced new rounds of funding, and two have announced FDA approval for novel therapies. Would you like to know more?”

  - User: “I don’t need to know about the funding rounds, but which companies were granted FDA approval?”

  - AI: “Acme Biotech, Inc. and Pacific Coast Pharmaceuticals.”

  - User: “What does Acme’s therapy do?”

  - AI: “Lowers blood pressure.”

  - User: “Interesting. Summarize all of this and add it to my notes for tomorrow’s all-hands meeting.”

- Development:

  - AI: “What should the subject line in the suggestion box email say?”

  - User: “Oh, right. Let’s go with ‘Incoming suggestion from’ followed by the user’s first name.”

  - AI: [Compiles and runs code for user.]

  - User: “Send a copy of the email to the user as well, with the subject line ‘Your suggestion has been submitted.’”
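As a rough illustration of where the Development exchange could end up, the sketch below uses Python’s standard `email.message.EmailMessage` to build the two messages the conversation describes. It is not output from any real tool. The function name `build_suggestion_emails`, the sender address `no-reply@example.com`, and the `user_email` argument are assumptions made for the example, and nothing is actually sent.

```python
from email.message import EmailMessage

MAX_SUGGESTION_CHARS = 480  # limit stated in the original request
SUGGESTION_INBOX = "suggestions@salesforce.com"  # address from the request
SENDER = "no-reply@example.com"  # hypothetical sender address


def build_suggestion_emails(first_name, suggestion, user_email):
    """Return (inbox_message, confirmation_message) without sending them."""
    first_name = first_name.strip()
    suggestion = suggestion.strip()
    if not first_name:
        raise ValueError("first name is required")
    if not suggestion:
        raise ValueError("suggestion is required")
    if len(suggestion) > MAX_SUGGESTION_CHARS:
        raise ValueError(f"suggestion exceeds {MAX_SUGGESTION_CHARS} characters")

    # Message for the suggestion inbox, with the subject agreed in conversation.
    inbox = EmailMessage()
    inbox["From"] = SENDER
    inbox["To"] = SUGGESTION_INBOX
    inbox["Subject"] = f"Incoming suggestion from {first_name}"
    inbox.set_content(suggestion)

    # Copy for the user, as requested in the final turn.
    confirmation = EmailMessage()
    confirmation["From"] = SENDER
    confirmation["To"] = user_email
    confirmation["Subject"] = "Your suggestion has been submitted"
    confirmation.set_content(suggestion)
    return inbox, confirmation
```

Note that the form as first described never collects the user’s email address, so a conversational tool would likely need to raise that gap before the final request could be fulfilled.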

By extending our workflow from a single prompt to an ongoing, back-and-forth dialogue, we’ve turned an already powerful idea into something truly revelatory—a tool that could serve as a single interface to just about any task. And it only took a couple more basic ideas to sketch it out:

- Conversations are punctuated by proposals—moments in which the tool presents an idea or plan of action that the user can accept, reject, or modify through a counterproposal.

- The flow of conversation is open-ended and iterative, with ideas taking shape over the course of multiple exchanges, often involving trial and error, until the needs of the user are met.

This, in a nutshell, is our vision for the future of AI. It’s a technology that doesn’t just offer revolutionary capabilities, but promises to transform the way we experience them. In fact, I believe AI stands to reverse the tradeoff we’ve all learned to live with: that high-quality results must be associated with a complex, labor-intensive workflow. AI, especially conversational AI, is about turning a tradeoff into a win-win for the first time.

Returning to the two-dimensional graph from earlier, we can see that AI promises a chance to move into an as yet unexplored quadrant: the upper right, where ease of use and capability are both high.

So how do we get there?

Given its vastness and sensitivity to nuance, it’s no surprise that conversational interactions have eluded machines for so long. Nevertheless, the field of Natural Language Processing, or NLP, has made the analytical understanding of conversation one of its primary missions. It’s a pursuit that has unfolded over the course of generations, driven by the efforts of an entire community of researchers, and our work benefits enormously from theirs—specifically, a rigorous, scientific understanding of concepts like the following:

- Natural language. The free-form nature of everyday speech, including ambiguous or even incorrect grammar, implied meaning, and slang.

- Persistent state. An ongoing recollection of the conversation’s history, and the many forms of shorthand with which it’s invoked. For instance, an idea initially given explicit mention may be subsequently referred to as “it” or “that.”

- Comfort with ambiguities. The ability to identify statements or questions that don’t make sense, make educated guesses to fill in blanks, and, when necessary, ask for more information.

- Domain expertise. The jargon, practices, and expectations inherent to a specific field, such as medicine, software development, or marketing.

Although these capabilities tend to come naturally to humans, each represents decades of work for AI researchers, and remains far from solved. But even incremental progress can deliver meaningful benefits in our quest to enable natural, language-driven workflows. In fact, recent advances are making true, human-like interactions possible in a way never before imagined, with many exciting examples suggesting this technology may soon be within reach.
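Two of the concepts listed earlier, persistent state and comfort with ambiguities, can be illustrated with a very small sketch. It assumes the hard part, extracting details from free-form language, has already happened: details arrive as keyword arguments, and the class name `Dialogue` and the required fields are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical details the task needs before it can proceed.
REQUIRED = ("recipient", "subject")


@dataclass
class Dialogue:
    known: dict = field(default_factory=dict)    # facts established so far
    history: list = field(default_factory=list)  # every user turn, in order

    def hear(self, utterance, **details):
        """Store the turn and any details it supplied, then choose a reply."""
        self.history.append(utterance)
        self.known.update(details)
        missing = [name for name in REQUIRED if name not in self.known]
        if missing:
            # Ask rather than guess when something essential is absent.
            return f"What should the {missing[0]} be?"
        return f"Ready: emailing {self.known['recipient']} about '{self.known['subject']}'."


chat = Dialogue()
print(chat.hear("Email the team.", recipient="the team"))
print(chat.hear("Say it's about the launch.", subject="the launch"))
```

The first turn leaves the subject unknown, so the sketch asks for it. The second turn fills the gap without the user repeating the recipient, because that detail persisted from earlier in the conversation.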

The breakthrough power of foundation models

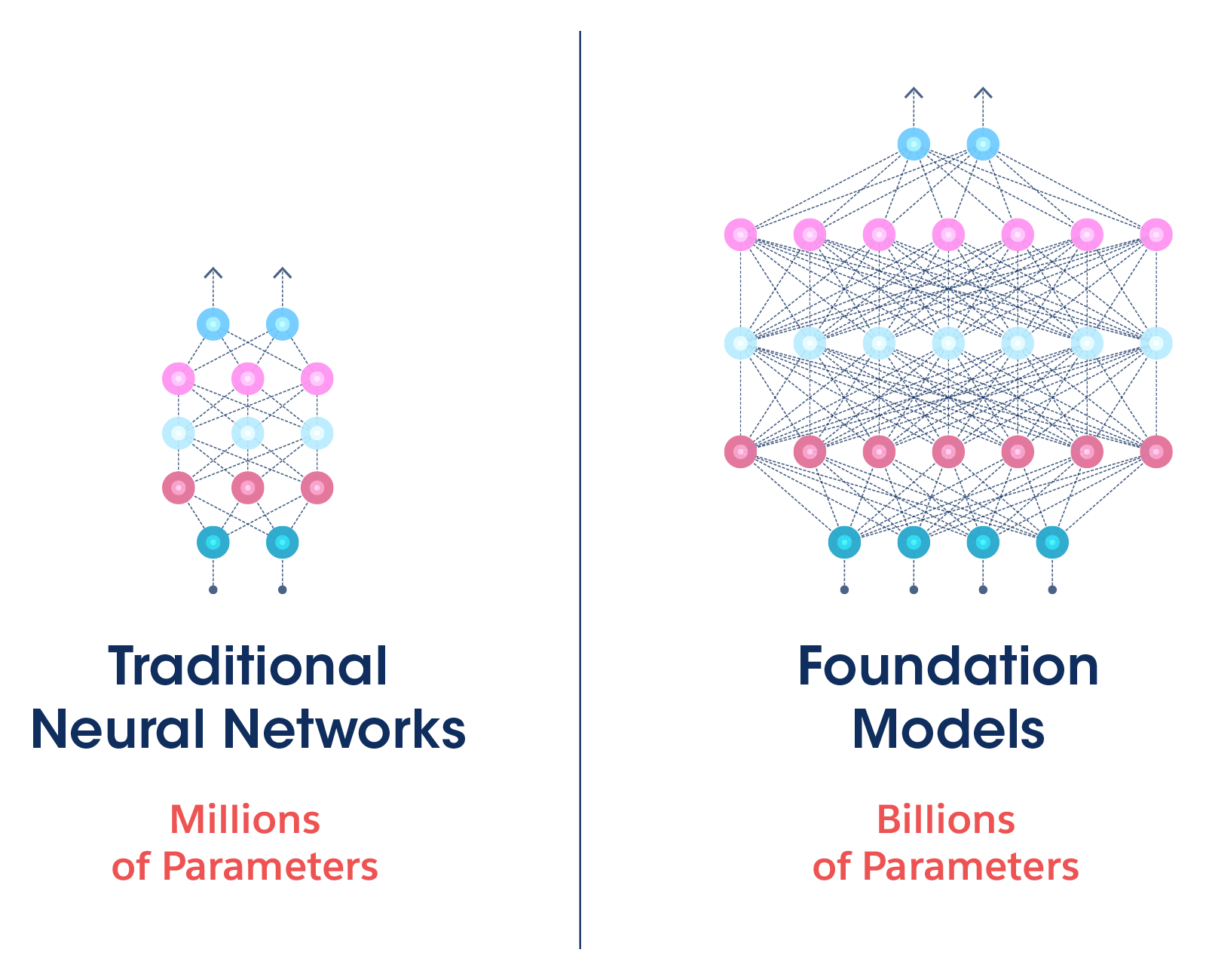

Among the central developments enabling this technology are large language models (LLMs), the best-known examples of what are often called foundation models. These large-scale neural networks are similar in concept to the ones that have grown in popularity over the last decade for their ability to recognize objects in images, translate language, and even synthesize realistic voices. But they differ in a few key aspects that vastly expand their potential.

First, they’re massive. Some of the largest examples feature hundreds of billions of parameters—the tiny, interconnected decision-making elements that collectively give rise to their capabilities—representing an order-of-magnitude increase over their predecessors. This boost provides the capacity necessary to consume corpora of training data inconceivable in previous years, including volumes of text measured in the tens of terabytes.

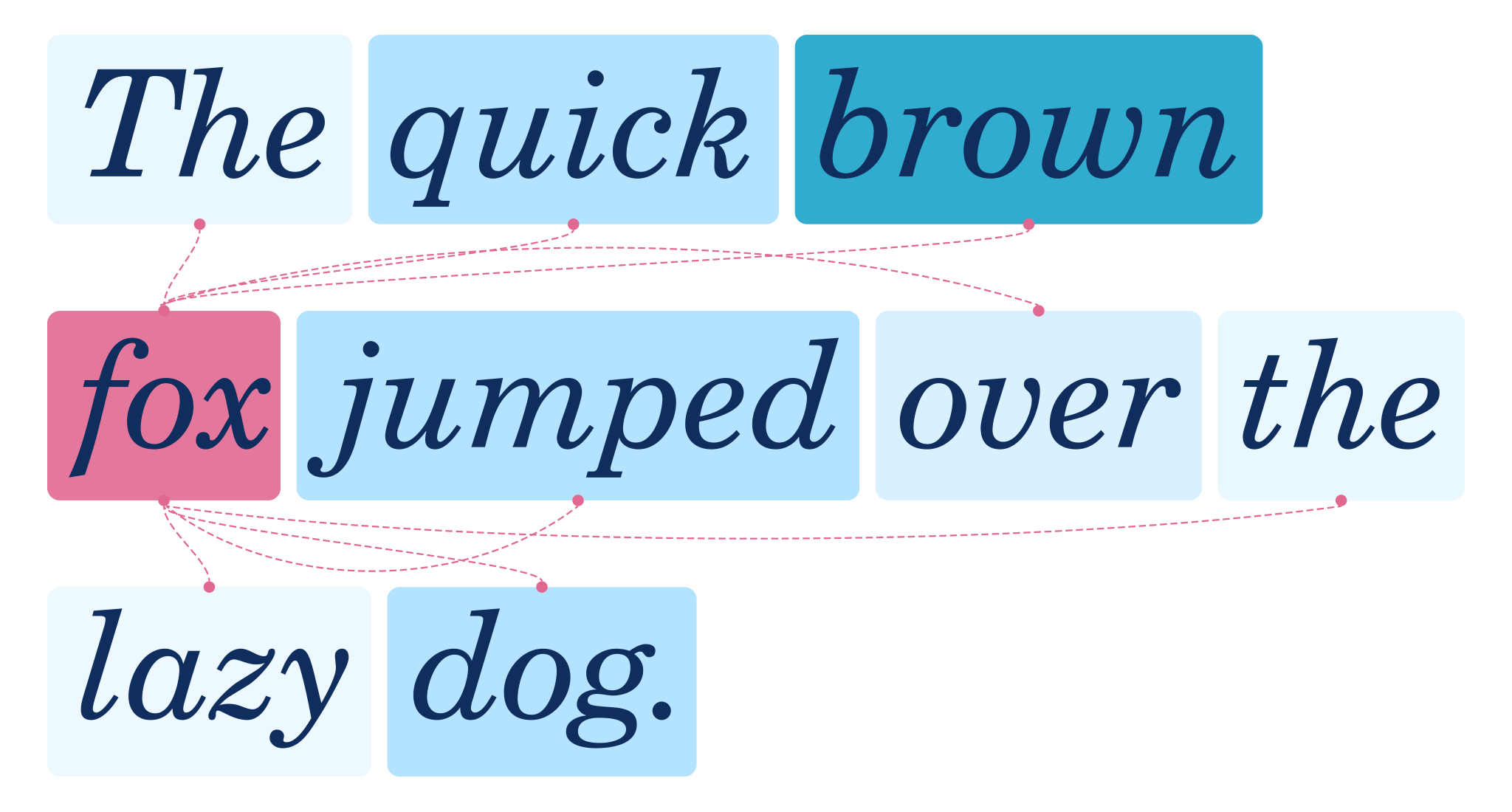

But the magic really begins with the way they put that scale to use. Foundation models are characterized by the unprecedented breadth with which they study their training data—identifying relationships between words, for instance, from the overt to the subtle, across large volumes of text. In contrast to previous networks, which might lose focus before reaching the end of a sentence, foundation models can infer the importance of one word in relation to another across entire paragraphs, or even pages.
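The mechanism usually credited with this long-range weighting is attention. The text above does not name it, so treat that link as an assumption. The sketch below computes scaled dot-product attention weights for one query word against every word in a short passage, using hand-picked toy vectors rather than learned ones.

```python
import math


def attention_weights(query, keys):
    """Softmax of scaled dot products: how much the query attends to each key."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Toy 2-d vectors, invented for illustration; real models learn these.
words = ["the", "ship", "set", "course", "for", "Saturn"]
keys = [[0.1, 0.0], [0.9, 0.2], [0.2, 0.1], [0.3, 0.8], [0.0, 0.1], [1.0, 0.9]]
query = [1.0, 1.0]  # stands in for a word asking "which words matter to me?"

weights = attention_weights(query, keys)
for word, weight in zip(words, weights):
    print(f"{word:>7}: {weight:.2f}")
```

Because the query is compared with every word directly, a word’s position in the passage does not by itself weaken the connection. That is one reason relationships can be tracked across paragraphs rather than only within a sentence.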

Even more potent is their ability to train themselves, obviating the need for human-curated datasets and sidestepping one of the biggest bottlenecks in machine learning. Foundation models make heavy use of techniques like the cloze test, in which a word in a sentence is masked before being read, giving the model a chance to leverage its growing understanding of textual relationships to make educated guesses. Over time, it learns that the missing word in a sentence like “it’s getting <blank> outside,” for example, is far more likely to be “hot” or “cold” than “television” or “blueberry.” Since the training data provides both the question and the answer, no manual administration is necessary, freeing the model to learn, autonomously, at blistering speeds.
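To make the self-supervision point concrete, the sketch below turns raw sentences into cloze questions by masking one word at a time, then “learns” by counting which word filled each context. The counting model is a deliberately crude stand-in for a neural network, and the three-sentence corpus is invented for the example.

```python
from collections import Counter, defaultdict

MASK = "<blank>"


def cloze_examples(sentence):
    """Yield (masked_context, answer) pairs, masking one word at a time."""
    words = sentence.lower().split()
    for i, answer in enumerate(words):
        context = tuple(words[:i] + [MASK] + words[i + 1:])
        yield context, answer


def train(corpus):
    """Count which word filled each masked context; no human labels needed."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        for context, answer in cloze_examples(sentence):
            counts[context][answer] += 1
    return counts


def guess(counts, masked_sentence):
    """Return candidate fillers for a masked sentence, most frequent first."""
    context = tuple(masked_sentence.lower().split())
    return [word for word, _ in counts[context].most_common()]


corpus = [
    "it's getting hot outside",
    "it's getting cold outside",
    "it's getting hot outside",
]
model = train(corpus)
print(guess(model, "it's getting <blank> outside"))  # ['hot', 'cold']
```

The text alone supplies both the question (the masked sentence) and the answer (the hidden word), which is why no manual labeling step appears anywhere in the sketch.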

These qualities combine to enable training sessions that truly boggle the mind: scrutinizing, for instance, every word of every article on Wikipedia, or even Common Crawl, a vast repository of text gathered from across the public web. And that’s when something remarkable happens: these enormous, hyper-attentive, prodigiously trained models develop a knack for language never before seen in machines. They can compose human-like works of expression, whether they’re finishing sentences or writing entire articles. They can read documents and answer questions about their contents with arresting insight. Some can even explain jokes.

For all these reasons, foundation models are an encouraging step towards the conversational interfaces we’ve been dreaming of. But even at their best, they’re only a start. Despite their often uncanny way with words, much of what makes conversation so powerful remains beyond their grasp.

Beyond foundation models: The open questions of conversational AI

In the years ahead, our research will explore the capabilities that lie beyond even the biggest foundation models, many of which cut to the very core of our notions of intelligence. Let’s talk about a few.

Knowledge representation

Despite the vast diversity of training data to which foundation models are exposed (often featuring extensive coverage of art, science, literature, politics, history, and more), it’s widely argued that they lack a conceptual awareness of the underlying subject matter, and that even their most impressive feats of expression are, in essence, a kind of statistical mimicry. It’s how such a model might correctly answer a question like “who played bass in The Beatles?” by leveraging an elaborate network of interrelated probabilities to generate the words “Paul” and “McCartney,” all without truly understanding notions like 20th century pop culture, rock instrumentation, or even music itself.

How AI will overcome this limitation is among the greatest open questions in the field, and the value of answering it is hard to overstate. It just might mean the difference between models that can simply react—albeit with an often striking depth—and models that can genuinely reason. Such an AI would understand the concepts behind the words, just like we do, unlocking far deeper and more perceptive conversational capabilities.

Few-shot learning

Cracking the problem of knowledge representation would likely unlock a constellation of related advances, with few-shot learning among the most useful. While modern AI is capable of amazing things, it often takes a burdensome volume of training data to get there—a hurdle of curation that can’t generally be overcome without significant budgets, resources, and expertise. This is a serious barrier to entry for even simple AI tasks, and can be an all-out dealbreaker for applications based on events that are rare by nature—sometimes mercifully so—such as predicting novel causes of automotive accidents.

A model capable of few-shot learning would be intelligent enough to derive the principles at work with just a handful of examples, the way a human being might, and thus generalize what it learns without requiring hundreds, thousands, or even millions of additional instances to connect the dots by brute force. It’d make virtually every task faster, cheaper, and more efficient to learn, while enabling many as-yet impossible applications.

Transfer learning

On a related note, a better grasp of the fundamentals of knowledge would enable models to apply their experience in one domain to others as well—a currently cutting-edge topic known as transfer learning. Near-term applications may be incremental, such as a robot gracefully translating a routine learned in one factory to another, perhaps with a different floor plan, but in the limit, machines may one day embrace our knack for metaphor and analogy altogether, and all that entails. Consider a student applying a time management technique learned in business school while preparing a holiday dinner under pressure (perhaps without even realizing it), a math teacher making references to pie slices to teach a lesson on fractions, or even a composer relating a rhythm to an animal’s footsteps or the notes of a chord to the tones of a sunset. Whether poetic or literal, our ability to invoke what we’ve learned in one aspect of life to another—often on the fly—is a defining feature of human intellect.

Active learning

Conversational AI will ideally engage in active learning as well: recognizing its own gaps in awareness, and knowing how to ask the user for the information needed to close them. It’s a habit far beyond most of today’s machine learning models, which have a tendency to respond to any query with an unexamined confidence that’s all too often undeserved. Tomorrow’s AI, in contrast, must assume a posture of humility, sensitive to the boundaries of its knowledge and eager to expand them. It’s a virtue that will help make systems safer and more transparent, all while encouraging them to grow in more diverse and organic ways.

Multimodal expression

For all our talk of language, it’s important to remember how much of a conversation’s meaning lies beyond the words themselves. Picture, for example, a brainstorming session between athletic shoe designers seated around a collage spread across a table: photographs, sketches, and anything else that might inspire an idea. Taken on its own, the conversation’s transcript might make mysterious references to “the stripes on this one” or “the laces on that one” that make little sense. When combined with the visuals, however, the ideas at play are brought to life by combining the richness of one medium with the specificity of the other. Granted, accounting for such non-textual content broadens the challenge of conversational AI considerably (in this case, it would entail understanding images as fluently as language, as well as how the two relate), but its implications for workflows in any number of domains will surely make the effort worthwhile. Imagine how much time our shoe designers might save by showing a picture of last year’s model to an AI-powered drafting tool, saying “let’s start with this,” and describing how they’d like it to evolve.

Common sense

Finally, these techniques may all play a role in solving one of the oldest problems in the history of AI: the acquisition of common sense. Although taken for granted, almost by definition, common sense refers to the body of knowledge we typically assume our fellow humans possess; a web of unwritten rules and inexpressible expectations, rarely acknowledged but essential for making sense of the world. Although lacking a rigorous definition or structure, and so vastly distributed across domains that it defies quantification, common sense is so fundamental to human reasoning that it’s hard to imagine getting through the day without it. For instance, when asking an assistant to help schedule an all-hands meeting, it’s usually not necessary to stipulate that it shouldn’t take place at midnight, or on a Sunday, or during the Super Bowl.

Such instincts are still routinely beyond the reach of even today’s most advanced AI. Consider how easily voice assistants are triggered by accident, for instance, failing to recognize that the user probably didn’t mean for their “Hair Metal Hits of the 80’s” playlist to start blaring during a candlelit dinner. Such lapses are an annoyance today, but as the role of AI grows, the stakes will be raised as well. Imagine asking an email assistant to organize your inbox only to find it achieved its goal—technically—by deleting every unread message, or working with a design tool that doesn’t understand the difference between inspiration and plagiarism. Examples like these remind us that although common sense may seem trivial, its absence can be devastating.

Real-world applications: Conversational AI in practice

It’s clear we have a long road ahead of us, but I’m excited to report that Salesforce Research has multiple projects in development that suggest the era of conversational workflows is closer than it might seem. We’re exploring a range of applications, from the creative to the technical, from time savers to game changers, and one of my favorites is a tool called CodeGen.

CodeGen is a fundamentally new way to develop software. Rather than attempt to program directly, the user simply describes the problem they’d like to solve in plain language, then sits back as the necessary code is generated (hence the name) automatically. It promises not only to save time for experienced developers, but to lower an age-old barrier to entry by allowing non-developers to create applications of their own as well. In other words, it’s the user’s ideas that count, not their ability to implement them.

And it’s an object lesson in bringing conversational AI to bear on a real-world problem. After being trained on enormous amounts of program code and corresponding descriptions written in natural language, a 16-billion-parameter model developed a surprisingly sophisticated understanding of how the two relate. And the result feels almost magical: the ability to accurately translate a casually written summary of an entirely new problem (including a universe of ideas the model hasn’t seen before) into the code that implements it.

Another standout project is CTRLsum, which automatically condenses long stretches of text into summaries of their most relevant points, drastically reducing the user’s information consumption burden. If CodeGen extends our ability to create as the world grows more complex, CTRLsum promises to help us to keep up with it in the first place.

Text summarization is a long-standing problem in AI, but foundation models have brought it closer to real-world use than ever before. Even more encouraging are the results of integrating a collection of large-scale models, each trained in different ways, to form a single tool that isn’t just accurate in its understanding of natural language, but flexible and subject to precise control. In the case of CTRLsum, that means an interface that accepts not just an input document, but a series of keywords describing the particulars of the user’s interests and even an estimate of the desired length, helping the model guide its summary in the most personally relevant direction. Given a newspaper’s coverage of sports or pop culture, for instance, the user may specify a favorite athlete or musician that the summary should emphasize above others. An academic, on the other hand, may provide a collection of journal articles and ask that the summary focus on each study’s results.
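The shape of that interface (a document, optional keywords, and a length budget) can be illustrated with a toy extractive summarizer. This is not CTRLsum, which relies on a trained neural model. The sketch simply ranks sentences by keyword hits, and the function name `keyword_summary` and the sample text are invented for the example.

```python
import re


def keyword_summary(document, keywords, max_sentences=1):
    """Pick the sentences mentioning the most keywords, kept in original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", document) if s.strip()]
    wanted = {k.lower() for k in keywords}

    def score(sentence):
        tokens = set(re.findall(r"[a-z']+", sentence.lower()))
        return len(tokens & wanted)

    # Rank by score (ties keep document order), then restore reading order.
    ranked = sorted(range(len(sentences)), key=lambda i: -score(sentences[i]))
    chosen = sorted(ranked[:max_sentences])
    return " ".join(sentences[i] for i in chosen)


report = (
    "The home team won the final by two goals. "
    "Rivera scored both goals in the second half. "
    "Ticket sales set a stadium record."
)
print(keyword_summary(report, ["Rivera"]))
print(keyword_summary(report, ["ticket", "record"]))
```

The same document yields different summaries depending on the keywords supplied, which is the kind of user control the paragraph above describes. The sketch only matches single-word keywords.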

In addition to its emphasis on personalization, CTRLsum can even answer questions, furthering its ability to extract relevant facts quickly. Consider, for example, the minutes of a meeting among event planners, from which the user needs only a couple of details. To save time, they may opt to forgo a summary altogether and simply ask for the name of the proposed venue, or for a list of the expected attendees. As pressure mounts for all of us to keep up with more and more, from news to social media to our own correspondence, we may soon wonder how we survived without tools like this.

Among the many things foundation models do well, their multimodal capabilities might be what I find most compelling: that is, those that fluidly translate between text, imagery, video, and other forms of content. A great example is the LAVIS library, so named because of its capacity for understanding both LAnguage and VISuals. It arms developers with powerful functions to quickly build intelligent tools that naturally straddle the line between different media.

In one of our early demonstrations, we used LAVIS to build a tool for answering questions about imagery (reasoning, in essence, about visual content using natural language). Given a selfie, we asked “In which country was this picture taken?” and were instantly given the correct answer: “Singapore.” Although selfies don’t always provide enough contextual information to make such a question answerable, this particular example prominently featured the famous Marina Bay Sands in the background, a widely recognized architectural attraction. Impressively, LAVIS identified the landmark, understood the nature of the question, and leveraged a connection between the two to synthesize a useful response.

The mind reels at the thought of how such multimodal intelligence can be applied in practice, and we’re still only scratching the surface. One immediate possibility, however, dear to the heart of many of our customers, is ecommerce. The relationship between text and imagery is fundamental to online retail, whether it’s the descriptions of items in a catalog, customer questions about texture, design, and color, or simply a search query. LAVIS can make all of these experiences deeper and more efficient: automatically generating captions for photos of garments, answering a question about a dining table’s finish, or providing more detailed search results than ever before.

Finally, there’s visual content generation, in which imagery isn’t merely analyzed, but created from scratch. Like CodeGen, such tools turn natural language descriptions into results that previously required hard-won expertise and technical knowledge, lowering creative barriers to entry alongside technical ones. Recent months have seen the headlines dominated by the sudden emergence of AI-generated photography and artwork, and we believe applications across the enterprise are waiting to be explored.

Although they’re still in development, we’re confident that technologies like these are glimpses of a dramatically different world, one in which professionals of all kinds can keep up with the demands of rapidly accelerating industries with tools that intelligently automate the aspects of their jobs that have become routine, while novices are empowered to solve entirely new problems on their own.

Ethics and safety

No discussion of conversational AI would be complete without acknowledging the unique questions of ethics and even safety it presents. A conversational exchange can take on virtually limitless forms, thanks to the fluidity of grammar and the interpretive nature of phrasing, making even a rudimentary conversational AI an unusually complex system. Validating such a system—that is, ensuring it behaves as intended, and identifying the circumstances under which it might fail to do so—is far from straightforward. But it’s also essential; given how intimate a role this technology is likely to play in our future, it must be built on a foundation of transparency and trust.

For one thing, the evolution of intelligent tools intersects with many of the most pressing questions of the moment, bias and fairness chief among them. How can we build conversational AI that treats an entire world of users with the same level of efficacy and respect? How can we teach it to gracefully navigate global divides—not just in language itself, but in the layers of culture, tradition, and societal expectations that surround it? Words don’t exist in a vacuum, after all, and true comprehension depends on much more than their dictionary definitions. Conversational AI will have to recognize this, just as we do.

Closely related, and equally urgent, is the matter of safety. Given the subjectivity of language, which sometimes confuses even human speakers, we’ll need powerful benchmarks and validation metrics for quantifying an AI’s ability to parse it accurately and predictably, alongside well-defined safeguards against unwanted courses of action. As exciting as the possibilities of conversational workflows are, we must be equally passionate about minimizing their potential to cause harm, even unintentionally.

No single solution will address all of these concerns, but meaningful steps in the right direction can be taken, even now. One is the embrace of the increasingly popular multi-stakeholder approach, in which a diverse and representative group of contributors is convened to bring a broader range of perspectives to the development, testing, and deployment of this technology. Another is supporting research into explainability: AI that can engage in a kind of introspection, shedding light on the reasoning behind its predictions, inferences, and decisions. These topics have been hotly discussed for years, and I’m optimistic that the evolution of conversational AI will spur progress on both fronts.

Finally, there’s the philosophical question that looms over the future of AI in general, and conversational AI in particular: the ultimate role of human users. No matter how fast, efficient, and automated that trip to Saturn becomes, the most important factor will always be the benefit it delivers for the passenger. So while the ship’s instrument panel may be simplified, and one day gone entirely—along with the traditional interfaces of our apps and devices—our sense of control must be preserved.

Thankfully, it could be argued that conversational AI is uniquely suited to deliver on that promise, as it’s inherently dependent on human participation. Far beyond merely keeping us “in the loop,” natural language interfaces can’t function without the input of our ideas, wishes, and contributions, an understanding of which is channeled directly into action. In that sense, I believe they stand to empower us like few other technologies can.

Conclusion

AI is enabling a fundamentally new class of solutions to some of the most vexing problems of the modern world. It’s extending our ability to consume information, accelerating our productivity, revealing hidden meaning within data, and even augmenting our artistry. But for us to truly reap its value, these capabilities must be accessible through an experience so intuitive that we can express ideas effortlessly, work collaboratively, and stay in control from start to finish.

Conversational AI is that experience. It delivers the extraordinary power of large-scale machine learning through the natural language we already use in daily life, asking virtually nothing from us even as it puts transformative capabilities at our fingertips: the ultimate balance of power and accessibility. By freeing us from menial tasks, it leaves us with the work that taps into our humanity: our vision, our creativity, and the unique perspectives that make each of us who we are.

Special thanks to Alex Michael for his contributions to both the writing and graphics in this piece.