Applying AI Ethics Research in Practice

February 2020

Summary from FAccT 2020 CRAFT Session

AI ethics practitioners in industry look to researchers for insights on how best to identify and mitigate harmful bias in their organization’s AI solutions and create fairer or more equitable outcomes. However, it can be a challenge to apply those research insights in practice. On Wednesday, January 29th, 2020, Yoav Schlesinger and I facilitated a CRAFT session on Bridging the Gap Between AI Ethics Research and Practice at FAccT 2020, bringing together practitioners and researchers to discuss these challenges, identify themes, and learn from each other. This is a summary of the insights we gained in the brief 90-minute session.

Industry perspectives

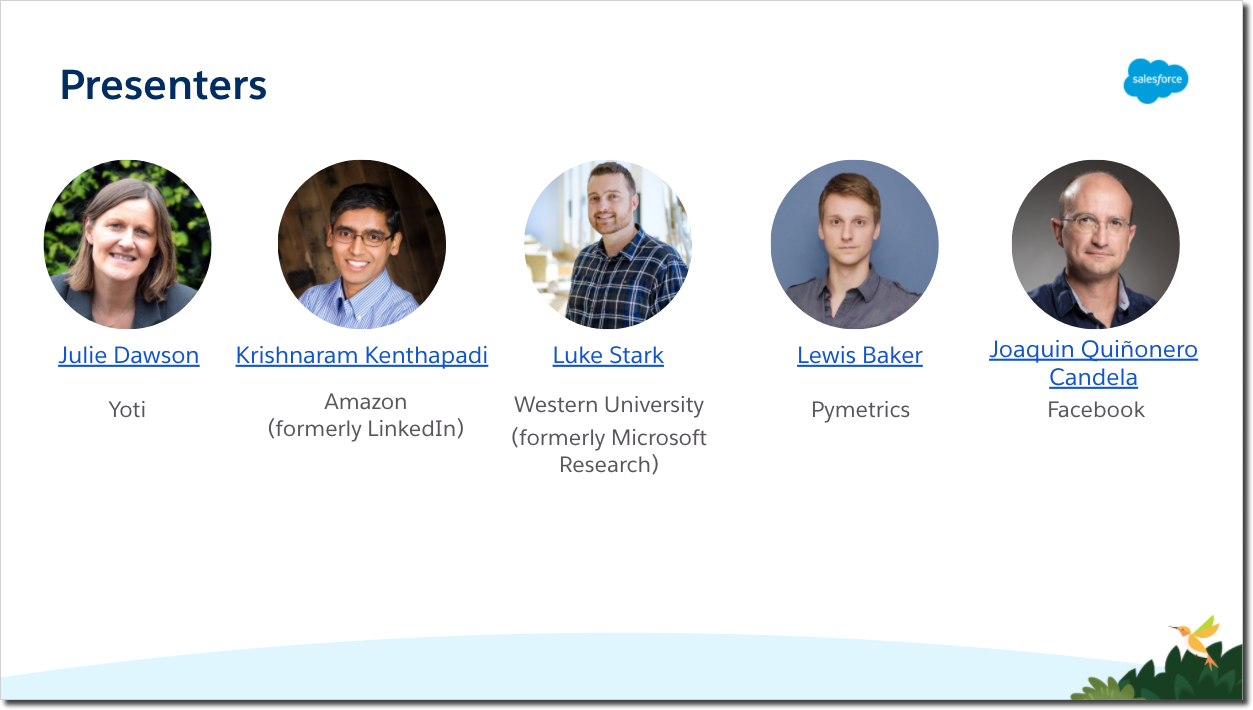

Five industry practitioners (from Yoti, LinkedIn, Microsoft, Pymetrics, and Facebook) shared insights from their work on fairness in machine learning applications: what has worked and what has not, lessons learned, and best practices instituted as a result. After the presentations, attendees discussed insights gleaned from the talks and brainstormed ways to build upon the practitioners’ work through further research or collaboration.

“Fairness is a process”

As Joaquin Candela posited in this workshop: “Fairness is a process.” His statement aptly summarizes the theme of the presentations and discussions. Rather than a one-size-fits-all approach or a one-time action, fairness assessment and enablement is an ongoing process of considering different fairness definitions, evaluating the impact of selecting one over another, and iterating to maximize the intended type of fairness. Critical to this process is picking a point of departure: with more than 21 operational definitions of fairness, it is incumbent on teams to make a good-faith effort to select one. Rather than letting the perfect be the enemy of the good, teams should operationalize against their selected definition. Fairness reviews, auditing, and documentation should be included in Product Requirements Documents (PRDs), Global Requirements Documents (GRDs), Launch Reviews, and requests for computing resources. According to Luke Stark, this all requires leadership, guided procedures, and resources to support adoption.
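To make "evaluating the impact of selecting one over another" concrete, here is a minimal sketch that scores the same predictions under two common fairness definitions. The data is invented for illustration, and the choice of these two definitions (statistical parity and equal opportunity) is an assumption; the session did not single them out. The function names are hypothetical.

```python
from typing import Sequence

def selection_rate(preds: Sequence[int]) -> float:
    """Fraction of cases that received a positive (1) prediction."""
    return sum(preds) / len(preds)

def true_positive_rate(preds: Sequence[int], labels: Sequence[int]) -> float:
    """Fraction of truly positive cases (label 1) that were predicted positive."""
    hits = [p for p, y in zip(preds, labels) if y == 1]
    return sum(hits) / len(hits)

# Toy data, invented for illustration only.
group_a = {"preds": [1, 1, 0, 0], "labels": [1, 1, 0, 0]}
group_b = {"preds": [1, 1, 0, 0], "labels": [1, 1, 1, 0]}

# Statistical (demographic) parity compares selection rates between groups.
parity_gap = selection_rate(group_a["preds"]) - selection_rate(group_b["preds"])

# Equal opportunity compares true positive rates between groups.
opportunity_gap = (
    true_positive_rate(group_a["preds"], group_a["labels"])
    - true_positive_rate(group_b["preds"], group_b["labels"])
)

print(f"statistical parity gap: {parity_gap:.3f}")
print(f"equal opportunity gap:  {opportunity_gap:.3f}")
```

On this toy data the two groups are selected at the same rate, so the parity gap is zero, yet qualified members of group B are selected less often than qualified members of group A. The same system can look fair under one definition and unfair under another, which is why the choice of definition has to be deliberate and documented.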

Additional insights:

1. Articulate, challenge, and document the problem and goals

It may seem obvious, but it is critical to begin by documenting the problem that needs to be solved (e.g., lack of diversity in search results for hiring talent, age discrimination) and the goal you are trying to achieve (e.g., search results should reflect gender distribution of all people who match the query, accurate age estimation while preserving privacy). Challenge underlying assumptions being made -- are the right questions being asked and are the right constructs being measured? Too often we aren’t solving the problem we think we are solving.

2. Identify metrics for success and plan to iterate

Identifying metrics for success at the beginning of a process avoids moving the goal line or being unclear about the impact of the solution (e.g., no change in business success metrics, improvement in accuracy of age prediction). Performance must be measured over time, and there should be plans to iterate. There is also a clear need to document, and remind stakeholders of, past impact on specific communities and how it will influence the solutions attempted. Creating impact requires that organizations create and enforce incentive structures, offering both rewards and consequences for decisions.

3. The best solution may not be legal

Unfortunately, the most predictive or accurate definition/application of fairness may not be legal in regulated industries. For example, after-the-fact adjustment (post-processing) that boosts individual candidates can be an attractive solution, but it is illegal in hiring. The challenge, then, is that while it may be legally safer to do balancing in model design, it can also be less effective. An additional concern expressed was that collecting the data needed for fairness assessments creates a liability. Using sensitive attributes in ranking, as LinkedIn’s research showed, is possible and legally “safe,” whereas measuring actual denial of opportunity is much riskier. Differences in laws around the world add complexity.

4. AI can be better than humans

We are extremely aware of the potential for bias and harm in AI systems; however, it is important to remember that there are things at which humans can be lousy, like deciding whom to interview and hire (e.g., women and minorities are ~50% less likely to be invited to a job interview) or estimating age (e.g., root mean squared error for humans is 8 years vs. 2.92 years for Yoti). The people involved in the work presented all demonstrated that they care deeply about creating fairer systems and that their systems are making progress toward greater fairness.
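For readers unfamiliar with the root mean squared error figures quoted above, the following sketch shows how such a number is computed. The ages are invented for illustration and are not Yoti's data; the function name is hypothetical.

```python
import math
from typing import Sequence

def rmse(estimates: Sequence[float], actual: Sequence[float]) -> float:
    """Root mean squared error: square each error, average, take the root."""
    if len(estimates) != len(actual) or not estimates:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(e - a) ** 2 for e, a in zip(estimates, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Invented example: true ages and one estimator's guesses, in years.
true_ages = [18, 25, 40, 63]
guessed_ages = [20, 22, 41, 60]

print(f"RMSE: {rmse(guessed_ages, true_ages):.2f} years")
```

Because errors are squared before averaging, a few large misses raise the score more than many small ones, so a lower RMSE indicates estimates that are both closer and more consistent.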

Additional Resources from Presenters

Julie Dawson, Yoti:

Julie described how Yoti, a scaling digital identity company, has developed an age estimation approach, with ethical scrutiny baked in along the way. [video]

The age estimation approach is being used in a range of contexts, from safeguarding teens accessing live-streaming social media sites to adults purchasing age-restricted goods at a self-checkout. In each instance the image is deleted and no data is retained.

Julie described how Yoti has held workshops and invited a wide range of civil society groups, regulators, and academics to kick the tyres and give feedback. It has iterated and produced 5 versions of a white paper, refreshing it every few months as the algorithm’s accuracy improves. Yoti outlines how it built the algorithm and how people can opt out, clearly publishing the accuracy across ages, biological sex, and skin tones, as well as the false positives and the buffers.

Yoti described the review of algorithm impact by IEEE expert Dr Allison Gardner (to let a lay person know that an expert had looked at the numbers and detailed data), the healthy challenges it received from her, and the steps those challenges have nudged it to take.

Yoti outlined the steps it took to build its ethical framework, from devising principles, to setting up an external ethics board (with representatives from human rights, consumer rights, last mile tech, accessibility, and online harms) whose minutes and terms of reference are published openly, to becoming a benefit corporation (B Corp), scaling profits and purpose in parallel. In addition, it has evidenced its commitment to the Biometrics Institute principles, to the Safe Face Pledge, and to the 5Rights framework, to enable children and young people to access the digital world creatively, knowledgeably and fearlessly.

Luke Stark, Microsoft Research:

MSR CHI 2020 paper on fairness checklists is available for now at http://www.jennwv.com/papers/checklists.pdf

Lewis Baker, Director of Data Science, Pymetrics:

Pymetrics builds predictive models of job success that are tailored to specific roles at a company. This presentation focused on how pymetrics incorporates fairness into every stage of its process. To begin, we discussed how the status quo for hiring and selection is quite poor; to ignore the problem is to commit to a process that has seen a nearly 2:1 opportunity rate favoring white men over women and minorities [Quillian et al., 2017, PNAS]. Beyond this, traditional selection tools such as informal interviews and resume review have poor predictive validity for actual job performance [Schmidt & Hunter, 1998, Psychological Bulletin]. Partly for this reason, failure rates of employees can approach 50% within the first 18 months after hire [Murphy, 2012, Hiring for Attitude].

Lewis presented how training data is assessed for fairness by experts in the field, in consultation with subject matter experts in the role being modeled. All features in the model are curated to contain minimal differences between groups defined by protected attributes (e.g., age, ethnicity, or gender). However, in a real-life example of Simpson’s Paradox, it is possible to find bias in a success model for salespeople in Ohio, even if the same model would not show bias on a global population. Pymetrics addresses this by auditing all models for fairness using its open-source toolkit, audit-AI.
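The Ohio example can be illustrated with a small sketch in which a pooled audit shows no disparity while a per-region audit does. The counts are invented and are not Pymetrics data, and the code does not use the audit-AI API; `pass_rate_gap` and `pool` are hypothetical helpers written for this illustration.

```python
from typing import Dict, Tuple

Counts = Dict[str, Tuple[int, int]]  # group -> (passed, assessed)

# Invented counts for illustration only.
by_region: Dict[str, Counts] = {
    "Ohio": {"group_a": (30, 40), "group_b": (15, 40)},
    "elsewhere": {"group_a": (70, 160), "group_b": (85, 160)},
}

def pass_rate_gap(counts: Counts) -> float:
    """Largest minus smallest pass rate across groups (0.0 means parity)."""
    rates = [passed / assessed for passed, assessed in counts.values()]
    return max(rates) - min(rates)

def pool(regions: Dict[str, Counts]) -> Counts:
    """Sum the per-region counts into a single global table."""
    pooled: Dict[str, Tuple[int, int]] = {}
    for counts in regions.values():
        for group, (passed, assessed) in counts.items():
            p, n = pooled.get(group, (0, 0))
            pooled[group] = (p + passed, n + assessed)
    return pooled

global_gap = pass_rate_gap(pool(by_region))
regional_gaps = {region: pass_rate_gap(c) for region, c in by_region.items()}

print(f"global gap: {global_gap:.3f}")
for region, gap in regional_gaps.items():
    print(f"{region} gap: {gap:.3f}")
```

In this toy table both groups pass at the same rate globally, yet within Ohio group A passes twice as often as group B. Auditing each deployed model on the population it actually serves, rather than only on the pooled data, is what surfaces the difference.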

Lewis focused on two practicalities faced by Pymetrics. First, there are many alternative metrics for fairness, but statistical parity is the most conservative metric of success that is defined in legal precedent. Until case law supports another metric, clients will steer us there. Second, popular methods of reducing bias (like most post-processing steps published in IBM's AI Fairness 360 toolkit) are great in concept but are actively illegal under US hiring regulations.
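One common way to operationalize statistical parity in US hiring is the "four-fifths" guideline, under which a group whose selection rate falls below 80% of the highest group's rate is flagged for possible adverse impact. The talk summary does not name this rule, so treating it as the threshold is an assumption here; the applicant counts are invented and the function names are hypothetical.

```python
from typing import Dict, List

# Threshold from the four-fifths guideline; an assumption, not stated in the talk.
FOUR_FIFTHS = 0.8

def selection_rates(selected: Dict[str, int], applicants: Dict[str, int]) -> Dict[str, float]:
    """Selection rate per group: selected divided by applicants."""
    return {group: selected[group] / applicants[group] for group in applicants}

def impact_ratios(rates: Dict[str, float]) -> Dict[str, float]:
    """Each group's selection rate relative to the highest group's rate."""
    highest = max(rates.values())
    return {group: rate / highest for group, rate in rates.items()}

def flagged_groups(ratios: Dict[str, float], threshold: float = FOUR_FIFTHS) -> List[str]:
    """Groups whose impact ratio falls below the threshold."""
    return [group for group, ratio in ratios.items() if ratio < threshold]

# Invented counts for illustration only.
applicants = {"group_a": 100, "group_b": 80, "group_c": 50}
selected = {"group_a": 50, "group_b": 36, "group_c": 15}

ratios = impact_ratios(selection_rates(selected, applicants))
for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("below threshold:", flagged_groups(ratios))
```

A check like this only measures outcomes; it says nothing about how to correct them, which is where the legal constraint on post-processing described above comes in.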

Krishnaram Kenthapadi, Amazon:

The work presented on fairness-aware reranking for LinkedIn talent search was announced at LinkedIn's Talent Connect'18 conference [LinkedIn engineering blog; KDD'19 paper]. The key lesson is that building consensus and achieving collaboration across key stakeholders (such as product, legal, PR, engineering, and AI/ML teams) is a prerequisite for successful adoption of fairness-aware approaches in practice.

Summary: Motivated by the desire to create fair economic opportunity for every member of the global workforce and the consequent need for measuring and mitigating algorithmic bias in LinkedIn’s talent search products, we developed a framework for fair re-ranking of results based on desired proportions over one or more protected attributes. As part of this framework, we devised several measures to quantify bias with respect to protected attributes such as gender and age, and designed fairness-aware ranking algorithms. We first demonstrated the efficacy of these algorithms in reducing bias without affecting utility, via extensive simulations and offline experiments. We then performed A/B testing and deployed this framework for achieving gender-representative ranking in LinkedIn Talent Search, where our approach resulted in a huge improvement in the fairness metrics without impacting the business metrics. In addition to being the first web-scale deployed framework for ensuring fairness in the hiring domain, our work forms the central component of LinkedIn’s “diversity by design” approach for hiring products, and thereby helps address a long-standing request from LinkedIn’s customers: “How can LinkedIn help us to source diverse talent?”

Bridging the gap between research and practice (i.e., lessons learned in practice):

- Post-Processing vs. Alternate Approaches: We decided to focus on post-processing algorithms due to the following practical considerations which we learned over the course of our investigations. First, applying such a methodology is agnostic to the specifics of each model and therefore scalable across different model choices for the same application and also across other similar applications. Second, in many practical internet applications, domain-specific business logic is typically applied prior to displaying the results from the ML model to the end user (e.g., prune candidates working at the same company as the recruiter), and hence it is more effective to incorporate bias mitigation as the very last step of the pipeline. Third, this approach is easier to incorporate as part of existing systems, as compared to modifying the training algorithm or the features, since we can build a stand-alone service or component for post-processing without significant modifications to the existing components. In fact, our experience in practice suggests that post-processing is easier than eliminating bias from training data or during model training (especially due to redundant encoding of protected attributes and the likelihood of both the model choices and features evolving over time). However, we remark that efforts to eliminate/reduce bias from training data or during model training can still be explored, and can be thought of as complementary to our approach, which functions as a “fail-safe.”

- Socio-technical Dimensions of Bias and Fairness: Although our fairness-aware ranking algorithms are agnostic to how the desired distribution for the protected attribute(s) is chosen and treat this distribution as an input, the choice of the desired bias / fairness notions (and hence the above distribution) needs to be guided by ethical, social, and legal dimensions. Our framework can be used to achieve different fairness notions depending on the choice of the desired distribution. Guided by LinkedIn's goal of creating economic opportunity for every member of the global workforce and by a keen interest from LinkedIn's customers in making sure that they are able to source diverse talent, we adopted a “diversity by design” approach for LinkedIn Talent Search, and took the position that the top search results for each query should be representative of the broader qualified candidate set. The representative ranking requirement is not only simple to explain (as compared to, say, approaches based on statistical significance testing), but also has the benefit of providing a consistent experience for a recruiter or a hiring manager, who could learn about the gender diversity of a certain talent pool (e.g., sales associates in Anchorage, Alaska) and then see the same distribution in the top search results for the corresponding search query. Our experience also suggests that building consensus and achieving collaboration across key stakeholders (such as product, legal, PR, engineering, and AI/ML teams) is a prerequisite for successful adoption of fairness-aware approaches in practice.
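The idea of re-ranking as a final, model-agnostic step that takes a desired distribution as input can be sketched as follows. This is a simplified greedy illustration written for this summary, not the algorithm from the KDD'19 paper or LinkedIn's production code; the candidate tuples, group labels, scores, and the `rerank` function are all hypothetical.

```python
import math
from typing import Dict, List, Tuple

Candidate = Tuple[str, str, float]  # (candidate id, group, model score)

def rerank(candidates: List[Candidate], desired: Dict[str, float], top_k: int) -> List[Candidate]:
    """Greedy re-ranking: keep every prefix close to the desired group proportions."""
    # One queue per group, each sorted by descending model score.
    queues: Dict[str, List[Candidate]] = {group: [] for group in desired}
    for cand in sorted(candidates, key=lambda c: c[2], reverse=True):
        queues.setdefault(cand[1], []).append(cand)
    counts = {group: 0 for group in queues}
    ranked: List[Candidate] = []
    for k in range(1, min(top_k, len(candidates)) + 1):
        available = [g for g in queues if queues[g]]
        # Groups that must be served now to reach their minimum share at position k.
        below_min = [g for g in available if counts[g] < math.floor(desired.get(g, 0.0) * k)]
        # Groups that may still be served without exceeding their maximum share.
        below_max = [g for g in available if counts[g] < math.ceil(desired.get(g, 0.0) * k)]
        pool = below_min or below_max or available
        # Among eligible groups, take the one whose best remaining candidate scores highest.
        best = max(pool, key=lambda g: queues[g][0][2])
        ranked.append(queues[best].pop(0))
        counts[best] += 1
    return ranked

# Invented candidates; the desired distribution is an input chosen outside the algorithm.
candidates = [
    ("c1", "a", 0.95), ("c2", "a", 0.90), ("c3", "a", 0.85), ("c4", "b", 0.80),
    ("c5", "a", 0.75), ("c6", "b", 0.70), ("c7", "b", 0.65), ("c8", "b", 0.60),
]
top4 = rerank(candidates, desired={"a": 0.5, "b": 0.5}, top_k=4)
print([cand[0] for cand in top4])
```

Sorting purely by score would fill three of the top four slots from group "a", even though the qualified pool is evenly split. The re-ranker only reorders the model's output, which is why it can sit behind any model and after any business logic, as described above.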